Overview of Solar Radiation Resource Concepts

1.1 Introduction

This chapter discusses standard terms that are used to illustrate the key characteristics of solar radiation—the fuel for all solar technologies.

Beginning with the Sun as the source, this chapter presents an overview of the effects of Earth’s orbit and atmosphere on the possible types and magnitudes of solar radiation available for energy conversion. This overview concludes with an important discussion of the estimated uncertainties associated with solar resource data as affected by the experimental and modeling methods used to produce the data.

1.2 Radiometric Terminology

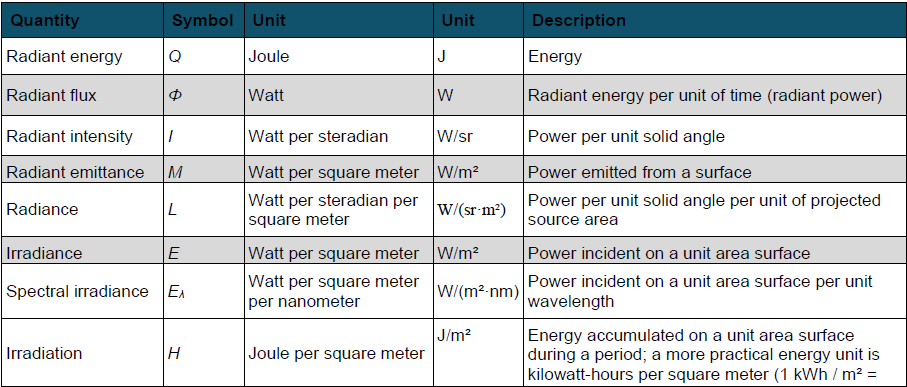

Before discussing solar radiation further, it is important to understand basic radiometric terms. Radiant energy, flux, power, and other concepts used in this course are summarized in Table 1-1.

Table 1-1. Radiometric Terminology and Units

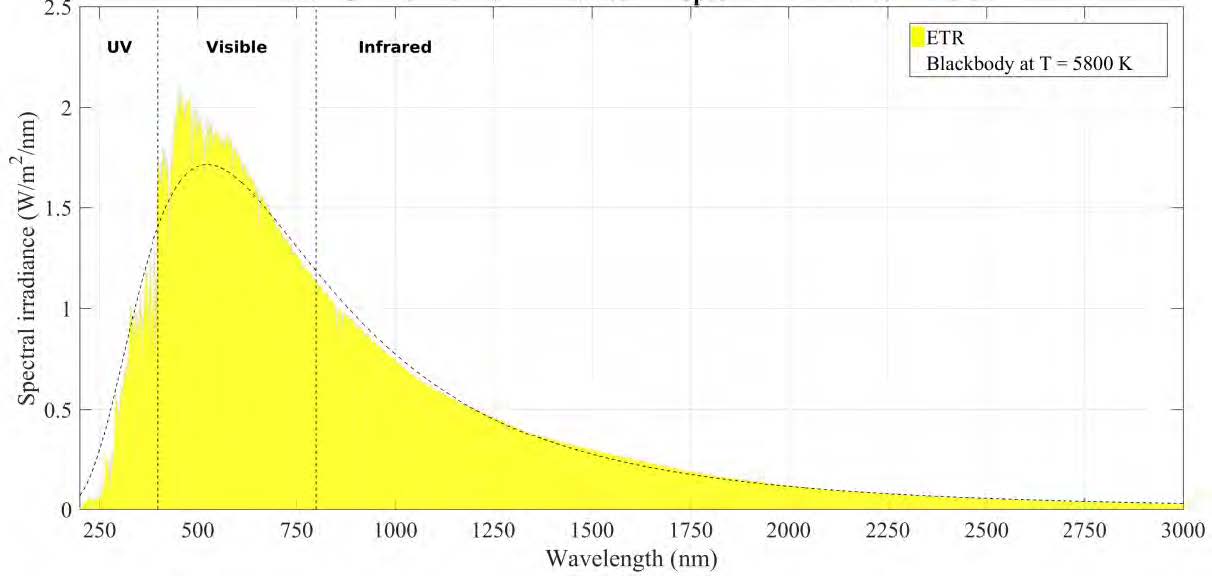

1.3 Extraterrestrial Irradiance

Any object with a temperature above absolute zero emits radiation. With an effective surface temperature of ≈5800 K, the Sun behaves like a quasi-static blackbody and emits radiation over a wide range of wavelengths, with a distribution that is close to that predicted by Planck’s law (Figure 1-1). This constitutes the solar spectral power distribution, or solar spectrum. For terrestrial applications, the useful solar spectrum, also called the shortwave spectrum (≈290–4000 nm), includes the spectral regions called ultraviolet (UV), visible, and near-infrared (NIR) (Figure 1-1). The latter is the part of the infrared spectrum that is below 4000 nm in the solar spectrum. In contrast, the longwave (or far-infrared) spectrum extends beyond 4000 nm (4 μm), where the planetary thermal emission is dominant. Based on a recent determination (Gueymard 2018a), most of the spectral irradiance (98.5%) of the extraterrestrial spectrum (ETS) is contained in the wavelength range from 290 to 4000 nm. In what follows, broadband solar radiation will always refer to this spectral range, unless specified otherwise.

Various ETS distributions have been derived based on ground measurements, extraterrestrial measurements, and physical models of the Sun’s output. Some historical perspective is offered by Gueymard (2004, 2006, 2018a). All distributions contain some deviations from the current standard extraterrestrial spectra used by ASTM Standard E490 (2019) (Figure 1-1). A new generation of ETS distribution, based on recent spectral measurements from space, was recently published (Gueymard 2018a).

1.4 Solar Constant and Total Solar Irradiance

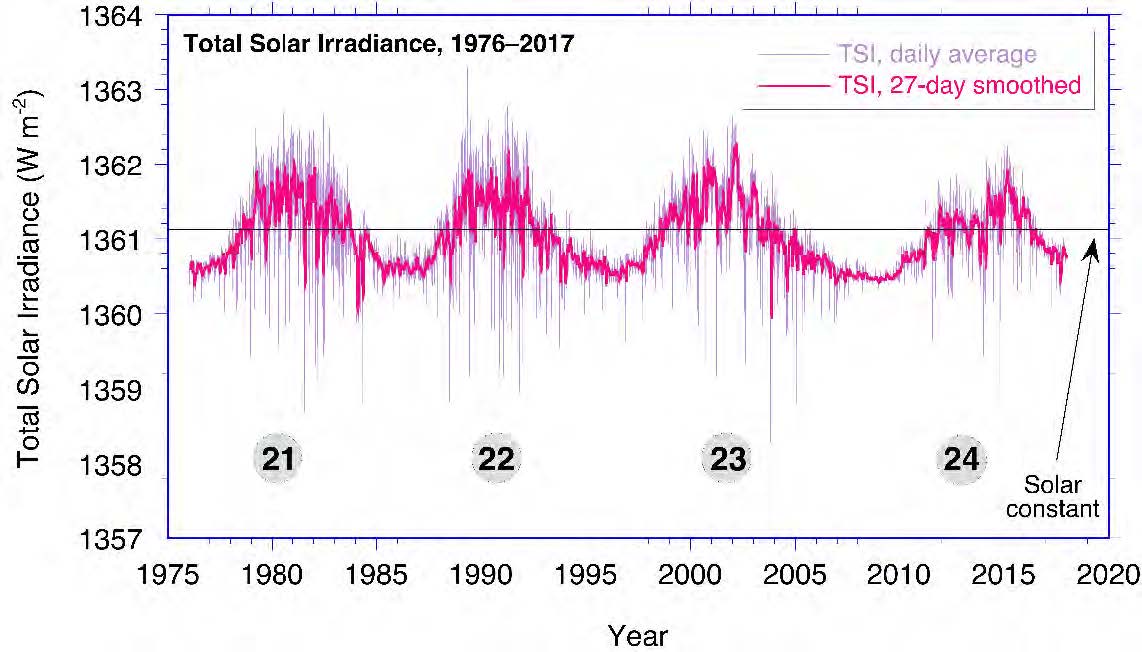

The total radiant power from the Sun is nearly constant. The solar output (radiant emittance) is called the total solar irradiance (TSI) and can be obtained as the integration of the ETS at 1 AU (astronomical unit, approximate average Sun-Earth distance) over all wavelengths. TSI was commonly called the solar constant (SC) until slight temporal variations were discovered (Fröhlich and Lean 1998, 2004; Kopp and Lean 2011). The solar constant is now defined as the long-term mean TSI. Both TSI and solar constant are made independent from the actual Sun-Earth distance by evaluating them at 1 AU. Small but measurable changes in the Sun’s output and TSI are related to its magnetic activity. There are cycles of approximately 11 years in solar activity, which are accompanied by a varying number of sunspots (cool, dark areas on the Sun) and faculae (hot, bright spots). TSI increases during high-activity periods because the numerous faculae more than counterbalance the effect of sunspots. From an engineering perspective, these TSI variations are relatively small, so the solar constant concept is still useful and perfectly appropriate in solar applications.

Figure 1-2 shows the latest version of a composite TSI time series based on multiple spaceborne instruments that have monitored TSI since 1978 using a variety of absolute scales (Gueymard 2018b). Estimates are also used for the period from 1976–1978 to make this time series start at the onset of solar cycle 21. The solar cycle numbers are indicated for further reference. (Solar cycle 25 is assumed to have started at the end of 2019, but this is still debated as of this writing.) Figure 1-2 shows the solar constant (always evaluated at 1 AU) as a horizontal solid line.

On a daily basis, the passage of large sunspots results in much lower TSI values than the solar constant. The measured variation in TSI resulting from the sunspot cycle is at most ±0.2%, only twice the precision (i.e., repeatability—not total absolute accuracy, which is approximately ±0.5%) of the most accurate radiometers measuring TSI in space. There is, however, some large variability in a few spectral regions—especially the UV (wavelengths less than 400 nm)—caused by solar activity.

Historic determinations of solar constant have fluctuated throughout time (Gueymard 2006; Kopp 2016). At the onset of the 21st century, it was 1366.1 ± 7 W/m2 (ASTM 2000; Gueymard 2004). More recent satellite observations using advanced sensors and better calibration methods, however, have shown that the solar constant is somewhat lower: ≈1361 W/m2. After careful reexamination and corrections of decades of past satellite-based records, Gueymard (2018b) proposed a revised value of 1361.1 W/m2.

According to astronomical computations such as those made by the National Renewable Energy Laboratory’s (NREL’s) solar position software (https://midcdmz.nrel.gov/spa/), using SC ≈1361 W/m2, the seasonal variation of ±1.7% in the Sun-Earth distance causes the irradiance at the top of the Earth’s atmosphere to vary from ≈1409 W/m2 (+3.5%) near January 3 to ≈1315 W/m2 (–3.3%) near July 4. This seasonal variation is systematic and deterministic; hence, it does not include the daily (somewhat random) or cyclical variability in TSI induced by solar activity, which was discussed previously. This variability is normally less than ±0.2% and is simply ignored in the practice of solar resource assessments.

1.5 Solar Geometry

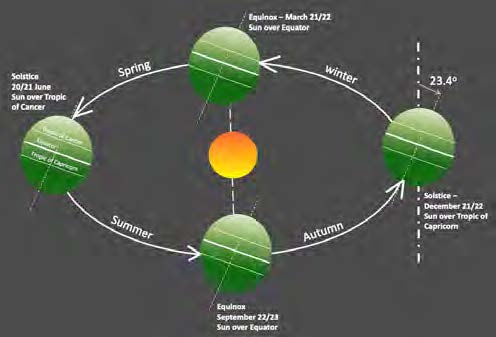

The amount of radiation exchanged between two objects is affected by their separation distance. The Earth’s elliptical orbit (eccentricity 0.0167) brings it closest to the Sun in January and farthest from the Sun in July, as mentioned. The average Sun-Earth distance is close to the new definition of the AU, which is exactly 149,597,870,700 m, as introduced in 2012 by the International Astronomical Union and recognized as a Système International (SI) unit in 2014 by the International Bureau of Weights and Measures (BIPM). Figure 1-3 shows the Earth’s orbit in relation to the northern hemisphere’s seasons, caused by the average ≈23.4° tilt of the Earth’s rotational axis with respect to the perpendicular to the plane of the orbit. The solar irradiance available at the top of atmosphere (TOA) is called the extraterrestrial radiation (ETR). ETR (see Eq. 1-1) is the power per unit area, or flux density, in watts per square meter (W/m2), radiated from the Sun and available at the TOA. Just like ETS, ETR varies with the Sun-Earth distance (r) and annual mean distance (r0):

$$\mathrm{ETR} = \mathrm{TSI}\left(\frac{r_0}{r}\right)^2 \tag{1-1}$$

As indicated in Section 1.4, it is customary to neglect temporal variations in TSI so that TSI can be replaced by the solar constant in Eq. (1-1) for simplification. The Sun-Earth distance correction factor, (r0/r)², in Eq. 1-1 is normally obtained from sun position algorithms, such as those described in Section 1.6.1. Daily values of sufficient accuracy for most applications can also be found in tabulated form, e.g., in Iqbal (2012).
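As a rough check of the seasonal range quoted in Section 1.4, the sketch below evaluates ETR = TSI × (r0/r)² at the two orbital extremes. It assumes that the annual mean distance r0 equals the semi-major axis of the orbit, so that r0/r = 1/(1 − e) at perihelion and 1/(1 + e) at aphelion. The function and constant names are illustrative only.

```python
SOLAR_CONSTANT = 1361.0  # W/m^2, rounded solar constant used in the text
ECCENTRICITY = 0.0167    # Earth's orbital eccentricity, as quoted in the text


def etr(tsi: float, r0_over_r: float) -> float:
    """Extraterrestrial radiation (W/m^2): ETR = TSI * (r0/r)^2."""
    return tsi * r0_over_r ** 2


# Assumption: r0 is taken as the semi-major axis, so the distance ratio
# at the orbital extremes depends only on the eccentricity.
etr_perihelion = etr(SOLAR_CONSTANT, 1.0 / (1.0 - ECCENTRICITY))  # early January
etr_aphelion = etr(SOLAR_CONSTANT, 1.0 / (1.0 + ECCENTRICITY))    # early July

print(f"Perihelion: {etr_perihelion:.1f} W/m^2")
print(f"Aphelion:   {etr_aphelion:.1f} W/m^2")
```

Under these assumptions, both results fall within about 0.2% of the ≈1409 W/m2 and ≈1315 W/m2 values cited in Section 1.4.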

From the TOA, the sun appears as a very bright disk with an angular diameter of ≈0.5° (the actual apparent diameter varies by a small amount, ±1.7%, because the Sun-Earth distance varies) surrounded by a completely black sky, apart from the light coming from stars and planets. This angle can be determined from the distance between the Earth and the Sun and the latter’s apparent diameter. More precisely, a point at the top of the Earth’s atmosphere intercepts a cone of light from the hemisphere of the Sun facing the Earth with a total angle of 0.53°±1.7% at the apex and a divergence angle from the center of the disk of 0.266° (half the apex angle, yearly average). Because the divergence angle is very small, the rays of light emitted by the Sun are nearly parallel; these are called the solar beam. The interaction of the solar beam with the terrestrial atmosphere is discussed next.
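The apparent size of the solar disk follows from simple geometry. The sketch below assumes a nominal solar radius of 695,700 km (the IAU nominal value, which is not given in this chapter) and uses the exact AU from the previous paragraphs. The function name is hypothetical.

```python
import math

AU_M = 149_597_870_700    # metres, exact definition of the AU quoted in the text
SOLAR_RADIUS_M = 6.957e8  # assumption: IAU nominal solar radius, not from the text


def apparent_half_angle_deg(distance_m: float, radius_m: float = SOLAR_RADIUS_M) -> float:
    """Half angle (degrees) subtended by a sphere of the given radius."""
    return math.degrees(math.asin(radius_m / distance_m))


half_angle = apparent_half_angle_deg(AU_M)
print(f"Half angle at 1 AU: {half_angle:.3f} deg, full disk: {2 * half_angle:.2f} deg")
```

At 1 AU this gives a half angle close to the 0.266° yearly average mentioned above.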

1.6 Solar Radiation and the Earth’s Atmosphere

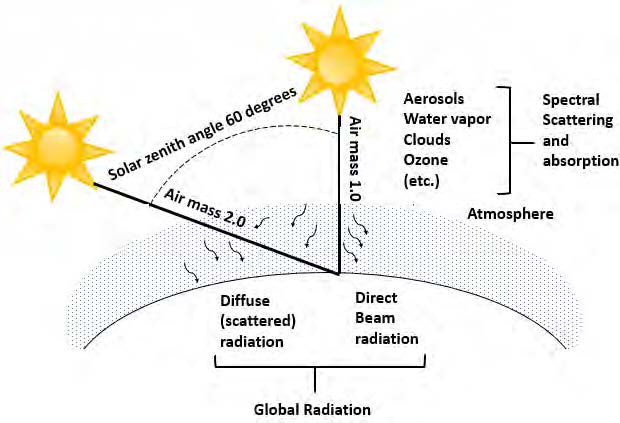

The Earth’s atmosphere can be seen as a continuously variable filter for the solar ETR as it reaches the surface. Figure 1-4 illustrates the “typical” absorption of solar radiation by atmospheric constituents such as ozone, oxygen, water vapor, or carbon dioxide. The amount of atmosphere the solar photons must traverse, also called the atmospheric path length or air mass (AM), depends on the relative position of the observer with respect to the sun’s position in the sky (Figure 1-4). By convention, air mass one (AM1) is defined as the amount of atmospheric path length observed when the sun is directly overhead. As a first approximation, and for low zenith angles, air mass is geometrically related to the solar zenith angle (SZA): it is approximately equal to the secant of SZA, or 1/cos(SZA). Air mass 1.5 (AM1.5) is a key quantity in solar applications and corresponds to SZA = 48.236° (Gueymard, Myers, and Emery, 2002). Air mass two (AM2) occurs when SZA is ≈60° and has twice the path length of AM1. By extrapolation, one refers to the value at the TOA as AM0 (Myers 2013).
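The secant approximation can be sketched in a few lines. This is a minimal illustration under the plane-parallel assumption; it ignores Earth curvature and refraction, so it should not be used at large zenith angles. The function name is hypothetical.

```python
import math


def relative_air_mass(sza_deg: float) -> float:
    """Plane-parallel approximation of air mass: AM = 1 / cos(SZA)."""
    if not 0.0 <= sza_deg < 90.0:
        raise ValueError("SZA must be in [0, 90) degrees for this approximation")
    return 1.0 / math.cos(math.radians(sza_deg))


for sza in (0.0, 48.236, 60.0):
    print(f"SZA = {sza:6.3f} deg -> AM = {relative_air_mass(sza):.3f}")
```

The three angles correspond to the AM1, AM1.5, and AM2 cases described above.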

The cloudless atmosphere contains gaseous molecules and particulates (e.g., dust and other aerosols) that reduce the ETR as it progresses farther down through the atmosphere. This reduction is caused mostly by scattering (a change of a photon’s direction of propagation) and also by absorption (a capture of radiation). Finally, clouds are the major elements that modify the ETR (also by scattering and absorption) on its way to the surface or to a solar collector.

Absorption converts part of the incoming solar radiation into heat and raises the temperature of the absorbing medium. Scattering redistributes the radiation in the hemisphere of the sky dome above the observer, including reflecting part of the radiation back into space. The longer the path length through the atmosphere, the more radiation is absorbed and scattered. The probability of scattering—and hence of geometric and spatial redistribution of the solar radiation—increases as the path (air mass) from the TOA to the ground increases.

Part of the radiation that reaches the Earth’s surface is eventually reflected back into the atmosphere. A fraction of this returns to the surface through a process known as backscattering. The actual geometry and flux density of the reflected and scattered radiation depend on the reflectivity and physical properties of the surface and constituents in the atmosphere, especially clouds and aerosols.

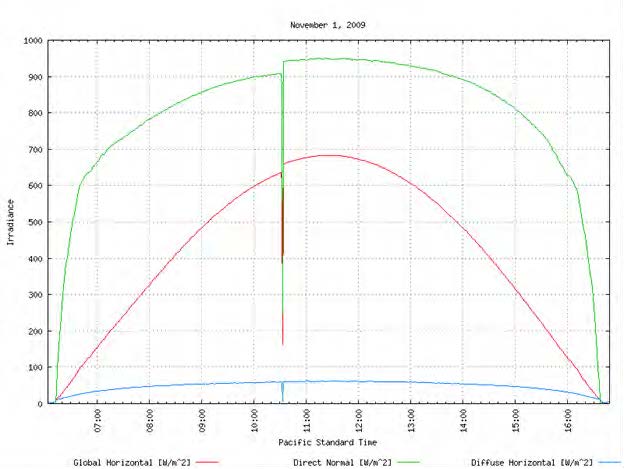

Based on these interactions between the radiation and the atmosphere, the terrestrial solar radiation is divided into two components: direct beam radiation, which refers to solar photons that reach the surface without being scattered or absorbed, and diffuse radiation, which refers to photons that reach the observer after one or more scattering events with atmospheric constituents. These definitions and their usage for solar energy are discussed in detail in Section 1.7.

Ongoing research continues to increase our understanding of the properties of atmospheric constituents, ways to estimate them, and their impact on the magnitude of solar radiation in the atmosphere at various atmospheric levels and at the surface. This is of great importance to those who measure and model solar radiation fluxes.

1.6.1 Relative Motions of the Earth and Sun

The amount of solar radiation available at the TOA is a function of TSI and the Sun-Earth distance at the time of interest, per Eq. (1-1). The slightly elliptical orbit of the Earth around the Sun was briefly described in Section 1.5 and is shown in Figure 1-3. The Earth rotates around an axis through the geographic north and south poles, inclined at an average angle of ≈23.4° from the perpendicular to the plane of the Earth’s orbit. The axial tilt of the Earth’s rotation also results in daily variations in the solar geometry during any year.

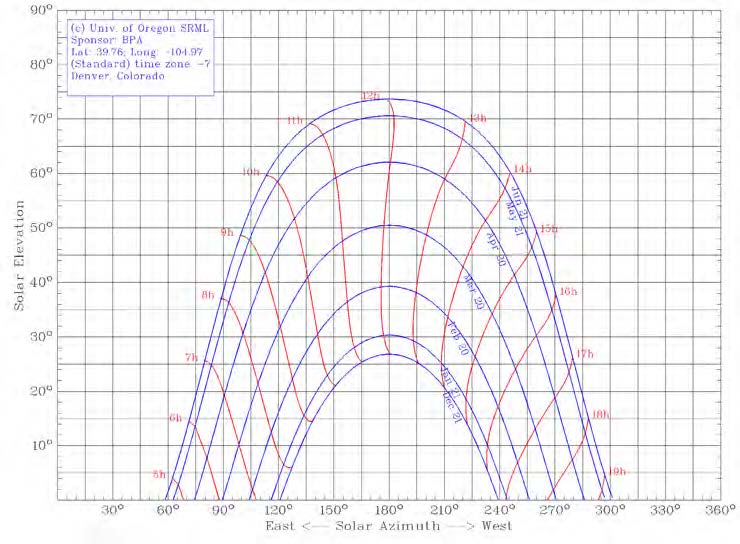

In the Northern Hemisphere, at latitudes above the Tropic of Cancer (23.437° N) near midday, the sun is low on the horizon during winter and high in the sky during summer. Summer days are longer than winter days because of progressive changes in where the sun rises and sets. Similar transitions take place in the Southern Hemisphere. All these changes result in changing geometry of the solar position in the sky with respect to time of year and specific location. Similarly, the resulting yearly variation in the solar input creates seasonal variations in climate and weather at each location. The solar position in the sky corresponds to topocentric angles, as follows:

- The solar elevation angle is defined as the angle formed by the direction of the sun and the local horizon. It is the complement of SZA, i.e., 90°–SZA.

- The solar azimuth angle is defined as the angle formed by the projection of the direction of the sun on the horizontal plane defined eastward from true north, following the International Organization for Standardization (ISO) 19115 standard. For example, 0° or 360° = due north, 90° = due east, 180° = due south, and 270° = due west.

An example of apparent sun path variations for various periods of the year is depicted in Figure 1-5. Because of their significance in any analysis of solar radiation data or any radiation model calculation, solar position calculations of sufficient accuracy are necessary, such as those derived from NREL’s Solar Position Algorithm (Reda and Andreas 2003, 2004). This algorithm predicts solar zenith and azimuth angles as well as other related parameters such as the Sun-Earth distance and the solar declination. All this is possible in the period from 2000 B.C. to 6000 A.D. with an SZA standard deviation of only ≈0.0003° (1″). To achieve such accuracy during a long period, this algorithm is very time consuming, with approximately 2300 floating-point operations and more than 300 direct and inverse trigonometric functions at each time step. Other algorithms exist, differing in the attained accuracy and in their period of validity. Various strategies exist to reduce operations, such as reducing the period of validity while maintaining high accuracy (Blanc and Wald 2012; Grena 2008; Blanco-Muriel et al. 2001) or keeping a long period while reducing the accuracy (Michalsky 1988).
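To make the geometry concrete, the sketch below estimates SZA from latitude, day of year, and hour angle. It is not the Solar Position Algorithm and does not approach its accuracy: it assumes Cooper's simple declination formula and the standard spherical relation cos(SZA) = sin(φ)·sin(δ) + cos(φ)·cos(δ)·cos(h), and it ignores refraction and the equation of time. All function names are hypothetical.

```python
import math


def declination_deg(day_of_year: int) -> float:
    """Approximate solar declination (degrees) from Cooper's formula (assumption)."""
    return 23.45 * math.sin(2.0 * math.pi * (284 + day_of_year) / 365.0)


def solar_zenith_deg(latitude_deg: float, day_of_year: int, hour_angle_deg: float) -> float:
    """Approximate SZA (degrees). Hour angle is 0 at solar noon, 15 degrees per hour."""
    lat = math.radians(latitude_deg)
    dec = math.radians(declination_deg(day_of_year))
    ha = math.radians(hour_angle_deg)
    cos_sza = math.sin(lat) * math.sin(dec) + math.cos(lat) * math.cos(dec) * math.cos(ha)
    cos_sza = max(-1.0, min(1.0, cos_sza))  # guard against rounding outside [-1, 1]
    return math.degrees(math.acos(cos_sza))


def solar_elevation_deg(sza_deg: float) -> float:
    """Solar elevation is the complement of SZA."""
    return 90.0 - sza_deg


sza_noon = solar_zenith_deg(40.0, 172, 0.0)  # 40 deg N, near the June solstice
print(f"SZA = {sza_noon:.2f} deg, elevation = {solar_elevation_deg(sza_noon):.2f} deg")
```

For resource assessment work, a validated algorithm such as those cited above should be preferred over this approximation.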

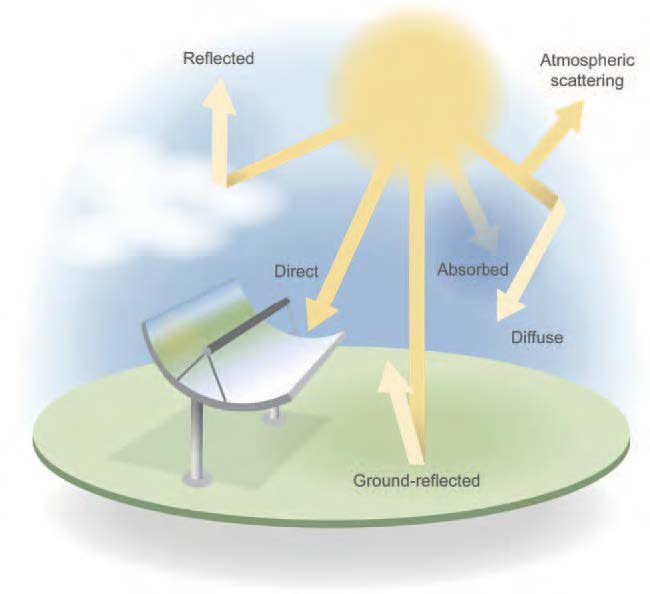

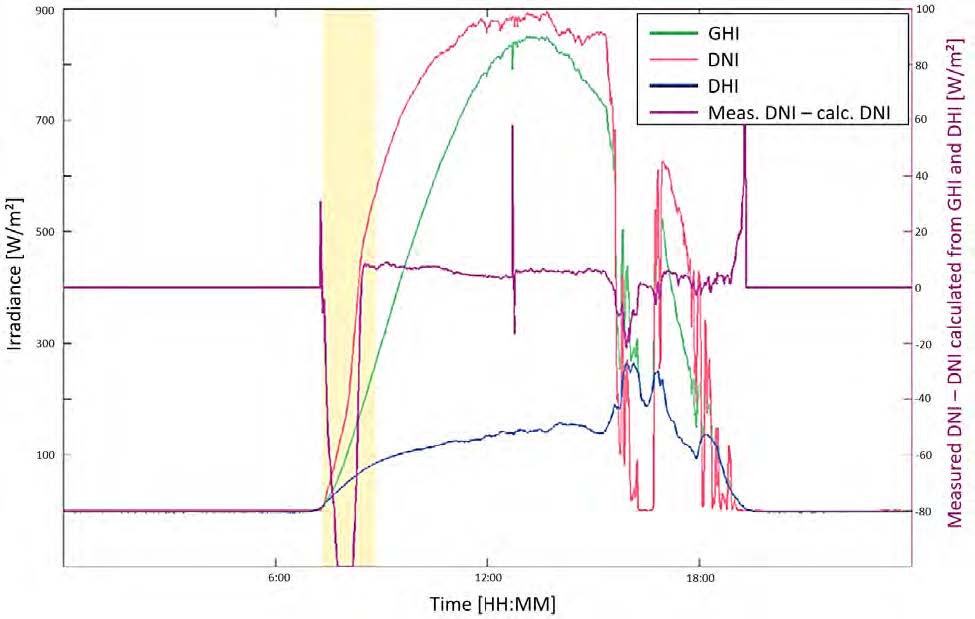

1.7 Solar Resource and Components

Radiation can be transmitted, absorbed, or scattered in varying amounts by an attenuating medium, depending on wavelength. Complex interactions of the Earth’s atmosphere with solar radiation result in three fundamental broadband components of interest to solar energy conversion technologies:

- Direct normal irradiance (DNI): solar (beam) radiation from the sun’s disk itself, of interest to concentrating solar technologies (CST), tracked collectors, and other solar technologies because of incidence-angle-dependent efficiency

- Diffuse horizontal irradiance (DHI): scattered solar radiation from the sky dome (excluding the sun and thus DNI)

- Global horizontal irradiance (GHI): geometric sum of the direct and diffuse horizontal components (also called the total hemispheric irradiance)

- Global tilted irradiance (GTI): geometric sum of the direct, sky diffuse, and ground-reflected components on a tilted surface. GTI is also referred to as the plane-of-array (POA) irradiance in the photovoltaic (PV) literature.

- Global normal irradiance (GNI): geometric sum of the direct, sky diffuse, and ground-reflected components on a tracking surface that remains perpendicular to the sunbeam.

The radiation components are shown in Figure 1-6.

These basic solar components are related to SZA by the fundamental expression:

$$\mathrm{GHI} = \mathrm{DNI}\cos(\mathrm{SZA}) + \mathrm{DHI} \tag{1-2}$$

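$$\mathrm{GTI} = \mathrm{DNI}\cos(\mathrm{AI}) + \mathrm{DHI}\cdot\mathrm{SVF} + \mathrm{RHI}\cdot\mathrm{GVF}$$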

where AI is the angle of incidence of the solar beam onto the tilted surface, SVF is the sky view factor between the collector and the visible part of the sky, GVF is the ground view factor between the collector and the visible part of the foreground surface, and RHI is the global reflected horizontal irradiance.
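The two component sums can be sketched as follows, assuming the closure relations GHI = DNI·cos(SZA) + DHI and GTI = DNI·cos(AI) + DHI·SVF + RHI·GVF, with the terms as defined above. Clamping the cosine at zero (no direct contribution when the sun is below the horizon or behind the collector plane) is an added assumption, and the function names are hypothetical.

```python
import math


def ghi_from_components(dni: float, dhi: float, sza_deg: float) -> float:
    """GHI (W/m^2) as the sum of the projected DNI and DHI."""
    cos_sza = max(math.cos(math.radians(sza_deg)), 0.0)  # assumption: no beam below horizon
    return dni * cos_sza + dhi


def gti_from_components(dni: float, dhi: float, rhi: float,
                        ai_deg: float, svf: float, gvf: float) -> float:
    """GTI (W/m^2) from direct, sky diffuse, and ground-reflected terms."""
    cos_ai = max(math.cos(math.radians(ai_deg)), 0.0)  # assumption: no beam from behind
    return dni * cos_ai + dhi * svf + rhi * gvf


# Illustrative input values only, not measurements.
print(ghi_from_components(dni=800.0, dhi=100.0, sza_deg=60.0))
print(gti_from_components(dni=800.0, dhi=100.0, rhi=100.0, ai_deg=60.0, svf=0.9, gvf=0.1))
```

The view factors are passed in as inputs because their values depend on the collector geometry and on the sky and ground models chosen by the analyst.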

1.7.1 Direct Normal Irradiance and Circumsolar Irradiance

By definition, DNI is the irradiance on a surface perpendicular to the vector (i.e., normal incidence) from the observer to the center of the sun caused by radiation that was not scattered by the atmosphere out of the region appearing as the solar disk (WMO 2018). This strict definition is useful for atmospheric physics and radiative transfer models, but it results in a complication for ground observations: it is not possible to measure whether or not a photon was scattered if it reaches the observer from the direction in which the solar disk is seen. Therefore, DNI is usually interpreted in a less stringent way in the world of solar energy. Direct solar radiation is understood as the “radiation received from a small solid angle centered on the sun’s disk” (ISO 2018). The size of this “small solid angle” for DNI measurements is recommended to be 5 × 10⁻³ sr (corresponding to ≈2.5° half angle) (WMO 2018). This recommended half angle is approximately 10 times larger than the angular radius of the solar disk itself based on no-atmosphere geometry, whose yearly average is 0.266°, as mentioned earlier. This relaxed definition is necessary for practical reasons because the instruments used for DNI measurement (pyrheliometers) need to track or follow the sun throughout its path of motion in the sky, and small tracking errors are to be expected. The relatively large field of view (FOV) of pyrheliometers reduces the effect of such tracking errors. Similarly, DHI must be obtained by masking the sun from the pyranometer detector with a small shade. An FOV with a radius of 2.5° is necessary to avoid the impact of tracking errors (e.g., wind-induced tracking errors) and to maintain an FOV complementary to that of the pyrheliometer.
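The relation between a conical FOV half angle and its solid angle can be checked with the standard formula Ω = 2π(1 − cos θ). The sketch below is illustrative only; the function names are hypothetical.

```python
import math


def cone_solid_angle_sr(half_angle_deg: float) -> float:
    """Solid angle (sr) of a cone with the given half angle."""
    return 2.0 * math.pi * (1.0 - math.cos(math.radians(half_angle_deg)))


def cone_half_angle_deg(solid_angle_sr: float) -> float:
    """Half angle (degrees) of a cone with the given solid angle."""
    return math.degrees(math.acos(1.0 - solid_angle_sr / (2.0 * math.pi)))


print(f"2.5 deg half angle -> {cone_solid_angle_sr(2.5):.2e} sr")
print(f"5e-3 sr            -> {cone_half_angle_deg(5e-3):.2f} deg half angle")
print(f"FOV to disk ratio  -> {2.5 / 0.266:.1f}")
```

The two recommended figures are only approximately equivalent: a 2.5° half angle corresponds to about 6 × 10⁻³ sr, whereas 5 × 10⁻³ sr corresponds to about 2.3°.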

To understand the definition of DNI and how it is measured by pyrheliometers in practice, the role of circumsolar radiation—scattered radiation coming from the annulus surrounding the solar disk—must be discussed. (The reader is referred to the detailed review, based on both experimental and modeling results, found in Blanc et al. (2014).) Because of forward scattering of direct sunlight in the atmosphere, the circumsolar region closely surrounding the solar disk (solar aureole) looks very bright and can alter the observed sunshape (Buie et al. 2003). The sunshape—a quantity not to be confused with the “shape of the Sun”—is the azimuthally averaged radiance profile as a function of the angular distance from the center of the sun normalized to 1 at the apparent sun’s disc center. The radiation coming from this region is called the circumsolar radiation. For the typical FOV of modern pyrheliometers (2.5°), circumsolar radiation contributes a variable amount, depending on atmospheric conditions, to the DNI measurement. Determining the magnitude of the circumsolar radiation is of interest in CST applications because DNI measurements are typically larger than the beam irradiance that can be used in concentrating systems. This causes an overestimate of CST plant production because the FOV of the concentrators (typically of the order of 1° or even less) is much smaller than the FOV of the pyrheliometers that are used on-site to determine the incident DNI.

The circumsolar contribution to the observed DNI can be quantified if the radiance distribution within the circumsolar region and the so-called penumbra function of the pyrheliometer are known. The latter is a characteristic of the instrument and can be derived from the manufacturer’s data. The former, however, is difficult to determine experimentally with current instrumentation. For instance, a method based on two commercial instruments (a sun and aureole measurement system and a sun photometer) has been presented (Gueymard 2010; Wilbert et al. 2013). Other instruments that can measure the circumsolar irradiance are documented in Wilbert et al. (2012, 2018), Kalapatapu et al. (2012), and Wilbert (2014).

To avoid additional measurements, substantial modeling effort is required and might involve estimation of the spectral distribution (Gueymard 2001). Some specific input data are rarely accessible in real time, particularly when a thin ice cloud (cirrus) reduces DNI but considerably increases the circumsolar contribution. Despite these difficulties and because of the special needs of the solar industry, new specialized radiative models have been developed recently to evaluate the difference between the true and apparent DNI using various types of observations (Eissa et al. 2018; Räisänen and Lindfors 2019; Sun et al. 2020; Xie et al. 2020). More research is being conducted to facilitate the determination of the circumsolar fraction at any location and any instant as part of solar resource assessments.

1.7.2 Diffuse Irradiance

A cloudless atmosphere absorbs and scatters some radiation out of the direct beam before it reaches the Earth’s surface. Scattering occurs in essentially all directions, away from the specific path of the incident beam radiation. This scattered radiation constitutes the sky diffuse radiation in the hemisphere above the surface. In particular, the Rayleigh scattering theory explains why the sky appears blue (short wavelengths, in the blue and violet parts of the spectrum, are scattered more efficiently by atmospheric molecules) and why the sun’s disk appears yellow-red at sunrise and sunset (blue wavelengths are mostly scattered out of the direct beam, whereas the longer red wavelengths undergo less scattering, resulting in a red shift). As mentioned above, the sky radiation in the hemisphere above the local surface is referred to as DHI. A more technical and practical definition of DHI is that it represents all radiation from the sky dome except what is considered DNI; hence, in practice, DHI is the total diffuse irradiance from the whole-sky hemisphere minus the 2.5° annulus around the sun center.

DHI includes radiation scattered by molecules (Rayleigh effect), aerosols (Mie effect), and clouds (if present). It also includes the backscattered radiation that is first reflected by the surface and then re-reflected downward by the atmosphere or clouds. The impact of clouds is difficult to model because they have optical properties that can vary rapidly over time and can also vary considerably over the sky hemisphere. Whereas a single and homogenous cloud layer can be modeled with good accuracy, complex three-dimensional cloud scenes present extreme challenges (Hogan and Shonk 2012).

1.7.3 Global Irradiance

The total hemispherical solar radiation on a horizontal surface, or GHI, is the sum of DHI and the projected DNI to the horizontal surface, as expressed by Eq. 1-2. This fundamental equation is used for data quality assessments, some solar radiation measurement system designs, and atmospheric radiative transfer models addressing the needs for solar resource data. Because GHI is easier—and less expensive—to measure than DNI or DHI, most radiometric stations in the world provide only GHI data. It is then necessary to estimate DNI and DHI by using an appropriate separation model, as discussed in the next section.
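The same relation, GHI = DNI·cos(SZA) + DHI, can be rearranged to derive a missing component or to compute a closure residual for quality checks. The sketch below is a minimal illustration; the function names are hypothetical, and any acceptance threshold applied to the residual would be a project-specific choice that is not defined here.

```python
import math


def dni_from_ghi_dhi(ghi: float, dhi: float, sza_deg: float) -> float:
    """DNI (W/m^2) derived from GHI and DHI by rearranging the closure relation."""
    cos_sza = math.cos(math.radians(sza_deg))
    if cos_sza <= 0.0:
        raise ValueError("Sun at or below the horizon; DNI cannot be derived")
    return (ghi - dhi) / cos_sza


def closure_residual(ghi: float, dni: float, dhi: float, sza_deg: float) -> float:
    """Measured GHI minus the GHI computed from DNI and DHI (W/m^2)."""
    return ghi - (dni * math.cos(math.radians(sza_deg)) + dhi)


# Illustrative input values only, not measurements.
print(dni_from_ghi_dhi(ghi=500.0, dhi=100.0, sza_deg=60.0))
print(closure_residual(ghi=510.0, dni=800.0, dhi=100.0, sza_deg=60.0))
```

Because the division by cos(SZA) amplifies errors when the sun is low, derived DNI values near sunrise and sunset deserve particular caution.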

1.7.4 Solar Resources for Solar Energy Conversion

Obtaining data time series or temporal averages of the solar radiation components—most importantly, GHI and DNI—that relate to a conversion system is the first step in selecting the site-appropriate technology and evaluating the simulated performance of specific system designs. Systems with highly concentrating optics rely solely on DNI. Low-concentration systems might also be able to use some sky diffuse radiation. Flat-plate collectors, fixed or tracking, can use all downwelling radiation components as well as radiation reflected from the ground if in the collector’s FOV.

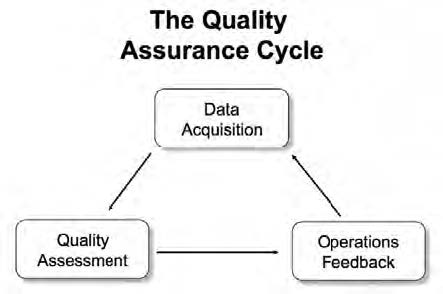

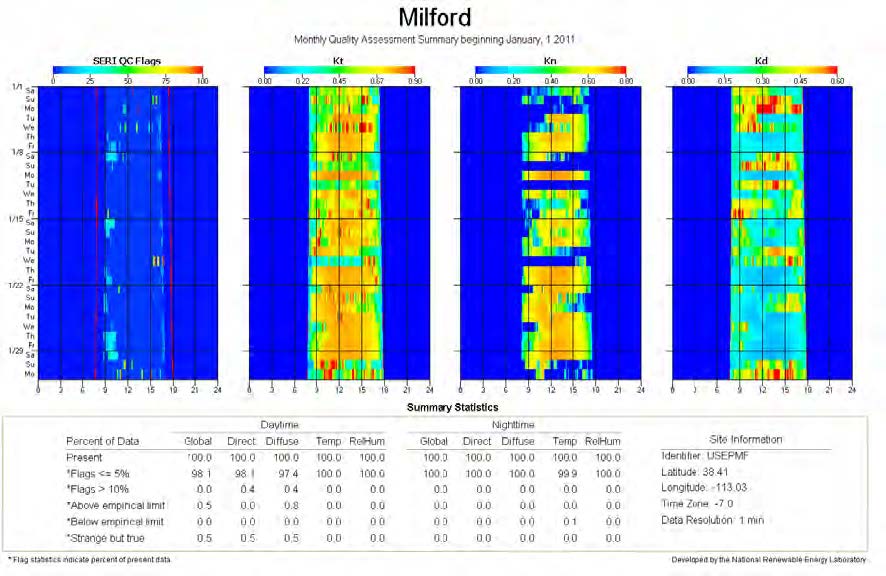

Solar radiation data are required at all stages of a solar project. Before construction, long time series of historical data are necessary to quantify the solar resource and its variability. During operation, real-time data are typically necessary to verify the performance of the system and to detect problems. In both cases, the required data can be obtained from measurements, modeling, or a combination of both. Typically, measurements are not used exclusively for the early stages of development because (1) long time series of measured irradiance data generally do not exist at the location of interest; (2) measured data, when available, most likely contain gaps that must be filled by modeling; and (3) conducting quality measurements is considerably more costly than operating models (assuming, of course, that the otherwise prohibitively high costs of satellite operations and data management are borne by other agencies). High-quality measurements remain essential, however, because their uncertainty is normally significantly less than that of modeled data, and thus they can serve to validate models and even improve the quality of long-term modeled time series through a “site adaptation” process. The development and validation of solar radiation models is an intricate procedure that requires irradiance observations obtained with very low measurement uncertainty, typically obtained only at research-class stations.

GHI is measured at a relatively large number of stations in the world; however, the quality of such data remains to be verified at most of these stations. Assuming that good-quality GHI data are available at a station of interest, how can the analyst derive the two other components—DNI and DHI—for example, to compute global irradiance on a tilted plane?

There are two possible solutions to this frequent situation. The first is to temporarily ignore the existing GHI data and obtain time series of GHI, DNI, and DHI from a reputable source of satellite-derived data. The modeled and measured GHI data can then be compared for quality assurance and possible bias corrections to the modeled data or, conversely, to determine the quality of the measured data. Both measured and modeled GHI values can incorporate systematic biases. Understanding the magnitude and nature of these biases and how they can affect the calculation is important when determining the uncertainty in the results.

The second method for determining DNI and DHI from GHI data consists of using one of numerous “separation” or “decomposition” models, about which considerable literature exists. Gueymard and Ruiz-Arias (2016) reviewed 140 such models and quantified their performance at 54 high-quality radiometric stations over all continents using data with high temporal resolution (1 minute, in most cases). Previous evaluations had targeted a limited number of models, exclusively using the more conventional hourly resolution, e.g., Ineichen (2008); Jacovides et al. (2010); Perez et al. (1990); and Ruiz-Arias et al. (2010). Because all current models of this type are empirical in nature, they are not of “universal” validity and thus might not be optimized for the specific location under scrutiny, particularly under adverse situations (e.g., subhourly data, high surface albedo, or high aerosol loads) that can trigger significant biases and random errors; hence, the most appropriate way to deal with the component separation problem cannot be ascertained at any given location. The solar radiation scientific research community continues to validate the existing conversion algorithms and to develop new ones.

In general, the higher the time resolution, the larger the random errors in the estimated DNI or DHI will be. Even large biases could appear at subhourly resolutions if the models used are not appropriate for short-interval data. This issue is discussed by Gueymard and Ruiz-Arias (2014, 2016), who showed that not all hourly models are appropriate for higher temporal resolutions and that large errors might occur under cloud-enhancement situations. A new avenue of research is to optimally combine the estimates from multiple models using advanced artificial intelligence techniques (Aler et al. 2017).

1.7.5 Terrestrial Solar Spectra

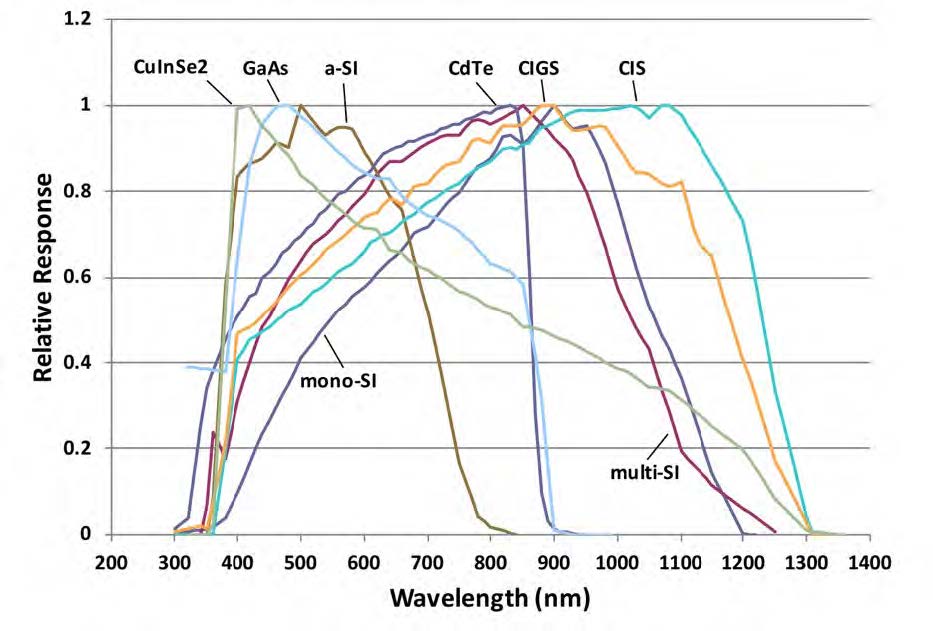

Many solar energy applications rely on collectors or systems that have a pronounced spectral response. The performance of the solar cells that constitute the building blocks of PV systems is affected by the spectral distribution of incident radiation. Each solar cell technology has a specific spectral dependence (see Figure 2-22). To allow for the comparison and rating of solar cells or modules, it is thus necessary to rely on reference spectral conditions. To this end, various international standardization bodies (ASTM, the International Electrotechnical Commission [IEC], and ISO) have promulgated standards that describe such reference terrestrial spectra. In turn, these spectra are mandated to test the performance of any solar cell using either indoor or outdoor testing methods. Currently, all terrestrial standard reference spectra are for an air mass of 1.5 (noted AM1.5). The reason for this as well as historical perspectives on the evolution of these standards are discussed by Gueymard et al. (2002). The standard reference spectra of relevance to the solar energy community are the following:

- ASTM G173: for GTI on a 37° tilted surface and DNI

- ASTM G197: for the direct, diffuse, and global components incident on surfaces tilted at 20° and 90°

- IEC 60904-3: similar to ASTM G173, with only slightly different values, lower by 0.29%

- ISO 9845-1: replicating ASTM G159 (now deprecated and replaced by G173); ISO is currently preparing an update.

In addition, CIE 241:2020 proposes a number of recommended reference solar spectra for industrial applications at various air masses, and ASTM G177 defines a “high-UV” spectrum at an air mass of 1.05 for material degradation purposes.

It is emphasized that these reference spectra correspond to clear-sky situations and are difficult to realize experimentally (Gueymard 2019). Spectroradiometers are now available that measure the spectral irradiance at high temporal resolution (e.g., each minute) under all possible sky conditions. Although the availability of spectral data is limited, such data can be used to test systems under field conditions.

Measuring Solar Radiation

Accurate measurements of the incoming irradiance are essential to solar power plant project design, implementation, and operations. Because solar irradiance measurements are relatively complex—and therefore expensive—compared to other meteorological measurements, they are available for only a limited number of locations. This is true especially for direct normal irradiance (DNI). Developers use irradiance data for:

- Site resource analysis

- System design

- Plant operation.

Irradiance measurements are also essential for:

- Developing and testing models that use remote satellite sensing techniques or available surface meteorological observations to estimate solar radiation resources

- Site adaptation of long-term resource data sets

- Developing solar resource forecasting techniques and enhancing their quality by applying recent measurements for the creation of the forecast

- Other disciplines not directly related to renewable energy, such as climate studies and accelerated weathering tests.

This chapter focuses on the selection, characterization, installation, design, and operation and maintenance (O&M), including calibration, of measurement systems suitable for collecting irradiance resource measurements for renewable energy technology applications.

2.1 Instrumentation

Before considering instrumentation options and the associated costs, the user should first evaluate the data accuracy or uncertainty levels that will satisfy the ultimate analyses based on the radiometric measurements. This ensures that the best value can be achieved after considering the various available measurement and instrumentation options.

By first establishing the project needs for solar resource data accuracy, the user can then base the instrument selection and the associated levels of effort necessary to operate and maintain the measurement system on an overall cost-performance determination. Specifically, the most accurate instrumentation should not be purchased if the project resources cannot support the maintenance required to ensure measurement quality that is consistent with the radiometer design specifications and the manufacturer’s recommendations. In such cases, alternative instrumentation designed for reduced maintenance requirements and reduced measurement performance—such as radiometers with photodiode-based detectors and diffuser disks or integrated measurement systems such as rotating shadowband irradiometers (RSIs)—could produce more consistent results (see Section 2.2.5). As stated, however, in this context the first consideration is the accuracy required to support the final analysis. If budget limitations cannot sustain the necessary accuracy, a reevaluation of the project goals and resources must be undertaken.

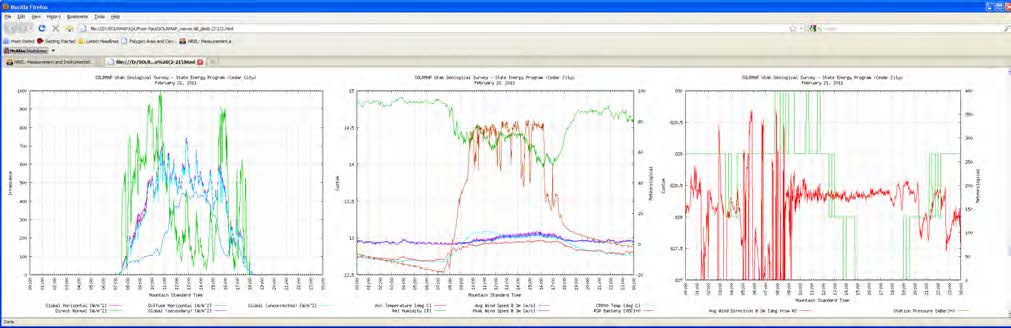

Redundant instrumentation is another important approach to ensure confidence in data quality. Multiple radiometers at the project site and/or providing for the measurement of the solar irradiance components—e.g., global horizontal irradiance (GHI), diffuse horizontal irradiance (DHI), DNI, and global tilted irradiance (GTI, also referred to as plane-of-array (POA) irradiance)—regardless of the primary measurement need, can greatly enhance opportunities for post-measurement data quality assessment, which is required to provide confidence in the resource data.
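One way to picture the post-measurement assessment that redundant components allow is a closure check between the three components. The sketch below assumes the relation GHI = DNI·cos(SZA) + DHI of Eq. (1-2a), where SZA is the solar zenith angle; the function names and the 5% tolerance are illustrative assumptions, not values taken from a standard.

```python
import math

def closure_residual(ghi, dni, dhi, sza_deg):
    """Measured GHI minus the GHI computed from DNI and DHI (all in W/m2)."""
    ghi_calc = dni * math.cos(math.radians(sza_deg)) + dhi
    return ghi - ghi_calc

def passes_closure(ghi, dni, dhi, sza_deg, rel_tol=0.05):
    """Return True if the three components agree within rel_tol of GHI.

    rel_tol is a hypothetical threshold chosen for illustration only.
    """
    if ghi <= 0:
        return False  # a relative check is not meaningful at night
    return abs(closure_residual(ghi, dni, dhi, sza_deg)) <= rel_tol * ghi

# Example record at SZA = 60 degrees, where cos(SZA) = 0.5
print(passes_closure(ghi=500.0, dni=800.0, dhi=100.0, sza_deg=60.0))
```

A record that fails such a check does not identify which instrument is at fault; it only flags the time stamp for closer inspection.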

Measuring other meteorological parameters relevant to the amounts and types of solar irradiance available at a specific time and location can also provide opportunities for post-measurement data quality assessment (see Section 2.3).

2.2 Radiometer Types

Instruments designed to measure any form of radiation are called radiometers. The earliest instruments for measuring solar radiation were developed to meet the needs of agriculture, which required bright sunshine duration to understand evaporation, and of physicists seeking to determine the sun’s output or “solar constant.” During the 19th and 20th centuries, the most widely deployed instrument for measuring solar radiation was the Campbell-Stokes sunshine recorder (Iqbal 1983; Vignola, Michalsky, and Stoffel 2020). This analog device focuses the direct beam with a simple spherical lens (glass ball) to create burn marks during clear periods (when DNI exceeds ≈120 W/m2) on a sensitized paper strip placed daily in the sphere’s focus curve. Records of percentage possible sunshine, obtained by comparing the total burn length to the corresponding day length at stations around the world, became the basis for characterizing the global distribution of solar radiation (Löf, Duffie, and Smith 1966). The earliest pyrheliometers (from the Greek words for fire, sun, and measure) were based on calorimetry and used by scientists to measure brief periods of DNI from various locations, generally at high elevations to minimize the effects of a thick atmosphere on the transmission of radiation from the sun. By the early 20th century, scientists had developed pyranometers (from the Greek words for fire and measure) to measure GHI to understand the Earth’s energy budget (Vignola, Michalsky, and Stoffel 2020).

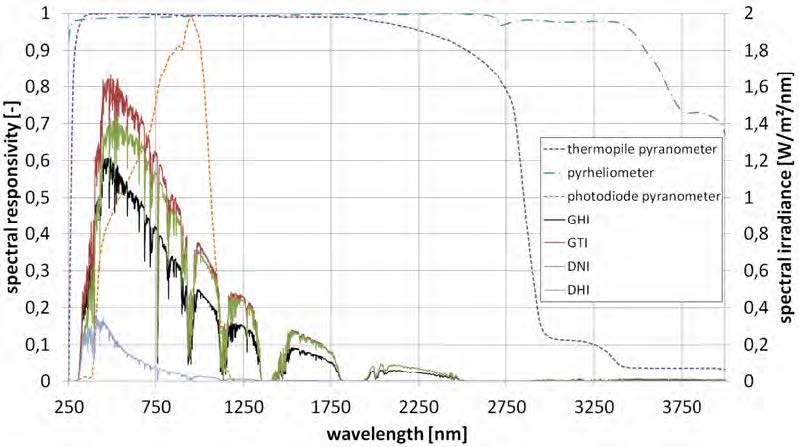

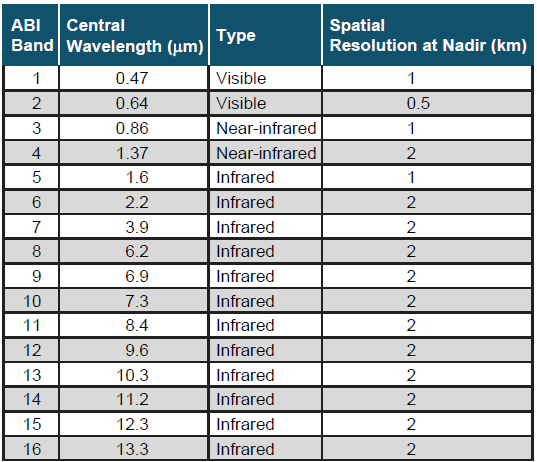

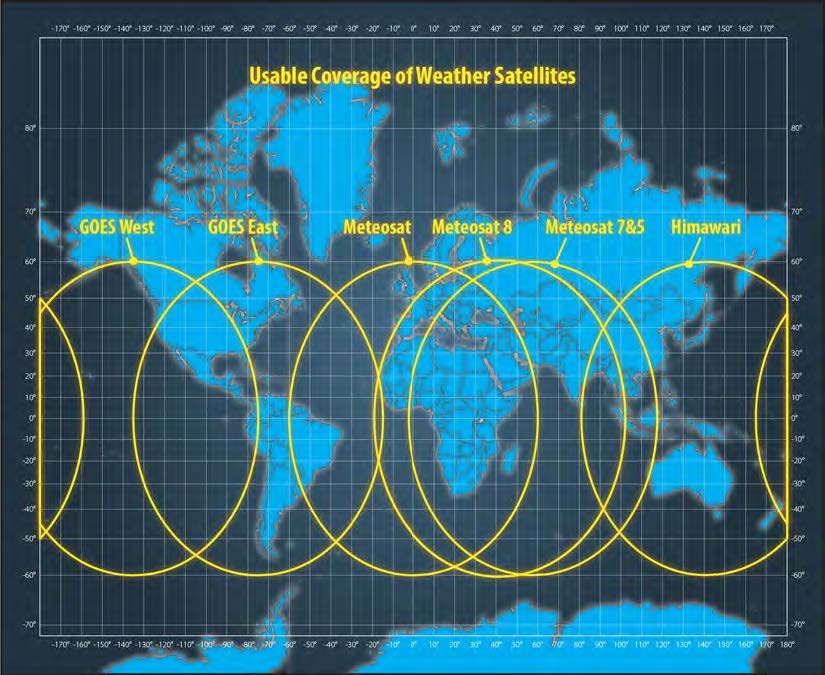

This section summarizes the types of commercially available radiometers most commonly used to measure solar radiation resources for solar energy technology applications. Solar resource assessments are traditionally based on broadband measurements—i.e., encompassing the whole shortwave spectrum (0.29–4 µm). More specialized instruments (spectroradiometers) are needed to evaluate the spectral distribution of this irradiance, which in turn is useful to investigate the spectral response of photovoltaic (PV) cells, for instance.

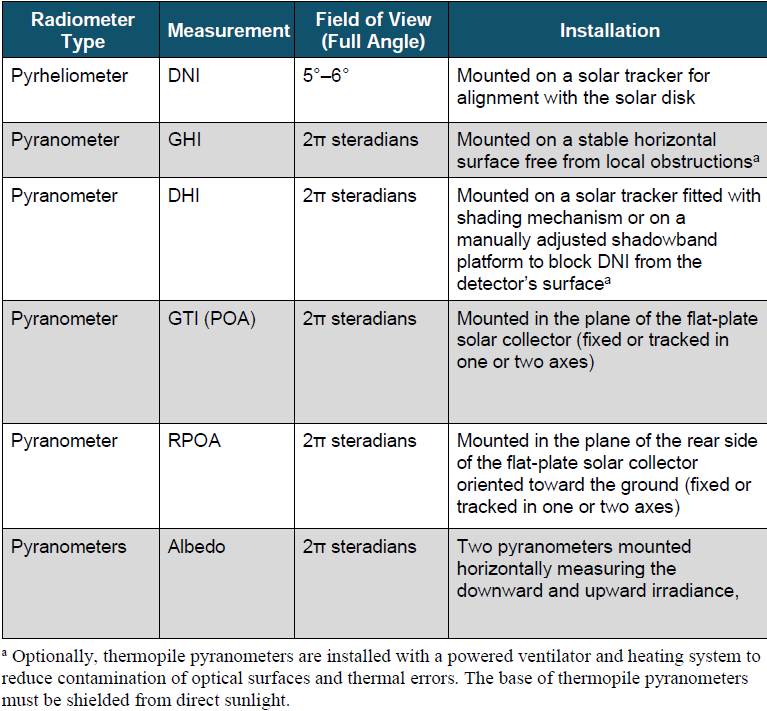

2.2.1 Pyrheliometers and Pyranometers

Pyrheliometers and pyranometers are two types of radiometers used to measure solar irradiance. Their ability to receive solar radiation from two distinct portions of the sky distinguishes their designs. As described earlier, pyrheliometers are used to measure DNI, and pyranometers are used to measure GHI, DHI, GTI (aka POA), or the in-plane rear-side irradiance (RPOA). Another important measurement involving pyranometers is the albedo, which can be used to estimate RHI (reflected horizontal irradiance) in Eq. (1-2b) as well as RPOA. Table 2-1 summarizes some key attributes of these two radiometers.

Table 2-1. Overview of Solar Radiometer Types and Their Applications

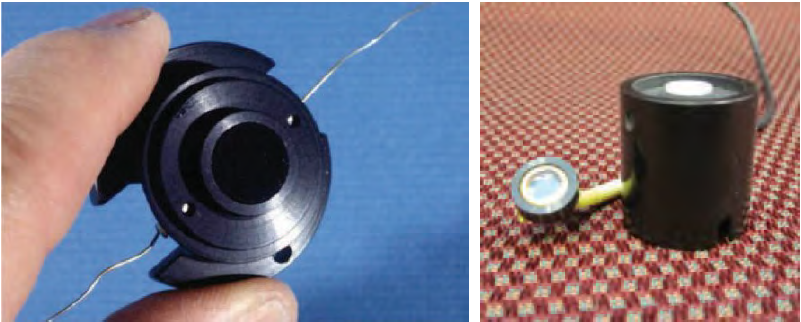

Pyrheliometers and pyranometers commonly use either a thermoelectric or photoelectric passive sensor to convert solar irradiance (W/m2) into a proportional electrical signal (microvolts [µV] DC). Thermoelectric sensors have an optically black coating that allows for a broad and uniform spectral response to all solar radiation wavelengths from approximately 300–3000 nm (Figure 2-1, left). The most common thermoelectric sensor used in radiometers is the thermopile. There are all-black thermopile sensors used in pyrheliometers and pyranometers as well as black-and-white thermopile pyranometers. In all-black thermopile sensors, the surface exposed to solar radiation is completely covered by the absorbing black coating. The absorbed radiation creates a temperature difference between the black side of the thermopile (i.e., “hot junction”) and the other side (i.e., “reference” or “cold junction”). The temperature difference causes a voltage signal. In black-and-white thermopiles, the surface exposed to radiation is partly black and partly white. In this case, the temperature difference between the black and the white surfaces creates the voltage signal. Despite having a relatively small thermal mass, thermopiles have 95% response times that are not negligible, typically 1–30 seconds; that is, the output signal lags the changes in solar flux. Some instruments include signal post-processing that attempts to compensate for this time lag. Recently, smaller thermopile sensors with response times as low as 0.2 second have become commercially available as well. A detailed analysis of radiometer response times is found in Driesse (2018).

In contrast to thermopiles, common photoelectric sensors generally respond to only the visible and near-infrared spectral regions from approximately 350–1,100 nm (Figure 2-1, right; Figure 2-2). Pyranometers with photoelectric sensors are sometimes called silicon (Si) pyranometers or photodiode pyranometers. These sensors have very fast time-response characteristics—on the order of microseconds.

For both thermopile and photoelectric detectors used in commercially available instruments, the electrical signal generated by exposure to solar irradiance levels of approximately 1000 W/m2 is on the order of 10 mV DC (assuming no amplification of the output signal and an appropriate shunt resistor for photodiode sensors). This rather low-level signal requires proper electrical grounding and shielding considerations during installation (see Section 2.3.4). Most manufacturers now also offer pyrheliometers and pyranometers with built-in amplifiers and/or digital outputs. Such digital instruments can be advantageous for several reasons. Systematic errors that depend on, e.g., the sensor temperature or the incident angle of the sun can be corrected directly in the instrument, which reduces the effort needed for data treatment and avoids user errors. Their implementation in a data acquisition system can be easier, and errors resulting from the transmission of low-voltage signals might be avoided. On the other hand, such digital sensors are sensitive to transients, surges, and ground potential rise, so the isolation and surge protection of power and communications lines are of high importance (Section 2.3.4).

G-173 conditions at AM1.5. Image by DLR

2.2.1.1 Pyrheliometers

Pyrheliometers are typically mounted on automatic solar trackers to maintain the instrument’s alignment with the solar disk and to fully illuminate the detector from sunrise to sunset (Figure 2-3 and Figure 2-4). Alignment of the pyrheliometer with the solar disk is determined by a simple diopter—a sighting device in which a small spot of light (the solar image) falls on a mark in the center of a target located near the rear of the instrument, serving as a proxy for alignment of the solar beam to the detector. The tracking error is acceptable as long as the solar image is at least tangent to the diopter target. Modern sun trackers use software to compute and precisely track the sun position. These calculations require that the sun tracker is assembled and positioned correctly (horizontally levelled, correct azimuth orientation), and tracking errors occur if the tracker is not installed and positioned correctly. Tracking errors caused by imperfect levelling vary with the sun position. Sun sensors can help to reduce the remaining tracking errors during periods with no direct irradiance; hence, they are used in high-quality stations. The sun sensor is tracked to the sun and uses a four-quadrant sensor placed behind a pinhole or a lens to detect the tracking error. The tracking error is then sent to the tracker software so that it can be corrected. By convention—and to allow for small variations in tracker alignment—view-limiting apertures inside a pyrheliometer allow for the detection of radiation in a narrow annulus of sky around the sun (WMO 2018), called the circumsolar region. This circumsolar radiation component is the result of forward scattering of radiation near the solar disk, itself caused by cloud particles, atmospheric aerosols, and other constituents that can scatter solar radiation. All modern pyrheliometers should have a 5° field of view (FOV), following the World Meteorological Organization (WMO) (2018) recommendations. 
The FOV of older instruments could be larger, however, such as 5.7°–10° full angle. Depending on the FOV (or, to be more precise, the sensor’s penumbra function) and on the tracker alignment, pyrheliometer measurements include varying amounts of circumsolar irradiance contributions to DNI. Although this is usually a very small contribution to the measurement, under atmospheric conditions of high scattering, it can be measurable, or even significant.

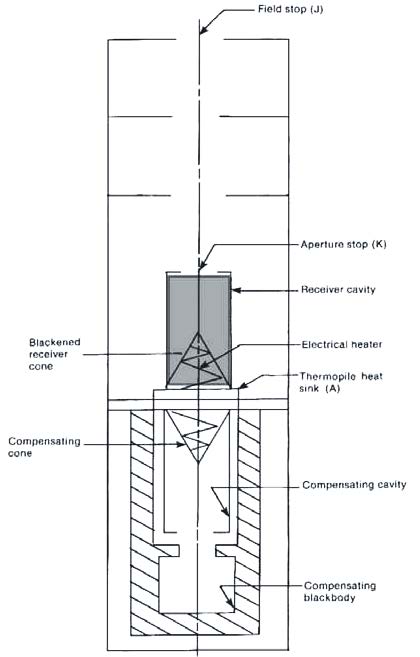

The most accurate measurements of DNI under stable conditions are accomplished using an electrically self-calibrating absolute cavity radiometer (ACR; see Figure 2-5). This advanced type of radiometer is the basis for the World Radiometric Reference (WRR), the internationally recognized detector-based measurement standard for DNI (Fröhlich 1991). The WMO World Standard Group of ACRs is shown in Figure 2-6. By design, ACRs have no windows and are therefore generally limited to fully attended operation during dry conditions to protect the integrity of the receiver cavity (Figure 2-7). Removable windows and temperature-controlled all-weather designs are available for automated continuous operation of these radiometers; however, the installation of a protective window nullifies the “absolute” nature of the DNI measurement. The window introduces additional measurement uncertainties associated with the optical transmittance properties of the window (made from either quartz or calcium fluoride) and the changes to the internal heat exchange resulting from the sealed system. Moreover, ACRs need some periods of self-calibration during which no exploitable measurement is possible. This creates discontinuities in the high-accuracy DNI time series that could be measured with windowed ACRs, unless a regular pyrheliometer is also present to provide the necessary redundancy (Gueymard and Ruiz-Arias 2015). Combined with their very high cost of ownership and operation, this explains why ACRs are rarely used to measure DNI in the field.

A unique 10-month comparison of outdoor measurements from 33 pyrheliometers, including ACRs, under a wide range of weather conditions in Golden, Colorado, indicated that the estimated measurement uncertainties at a 95% confidence interval ranged from ±0.5% for windowed ACRs to +1.4%/–1.2% for commercially available instruments (Michalsky et al. 2011). The results also suggested that the measurement performance during the comparison was better than indicated by the manufacturers’ specifications.

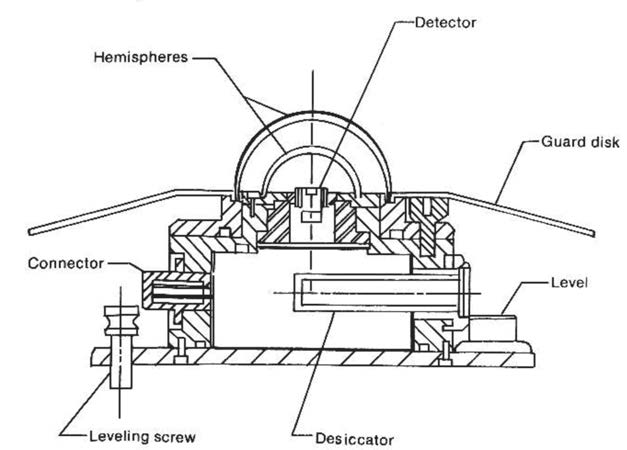

2.2.1.2 Pyranometers

A pyranometer has a thermoelectric or photoelectric detector with a hemispherical FOV (2π steradians, i.e., a 180° full angle covering all azimuths) (see Figure 2-4 and Figure 2-8). This type of radiometer is mounted horizontally to measure GHI. In this horizontal mount, the pyranometer has a complete view of the sky dome.

Ideally, the mounting location for this instrument is free of natural or artificial obstructions on the horizon. Alternatively, the pyranometer can be mounted at a tilt to measure GTI, e.g., in the case of latitude-tilt or 1-axis tracking systems, or vertically for building applications. In an upside-down position, it measures the ground-reflected irradiance. The local albedo is simply obtained by dividing the latter by GHI.
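The albedo calculation described above is a simple ratio. The following minimal sketch shows it with a guard against division by very small GHI values; the function name and the 50 W/m2 cutoff are assumptions made for illustration and are not prescribed by the text.

```python
def albedo(reflected_irradiance, ghi, min_ghi=50.0):
    """Ratio of ground-reflected irradiance to GHI (both in W/m2).

    Returns None when GHI is below min_ghi, a hypothetical cutoff that
    avoids unstable ratios near sunrise, sunset, and at night.
    """
    if ghi < min_ghi:
        return None
    return reflected_irradiance / ghi

# Example: 100 W/m2 measured by the downward-facing pyranometer, 500 W/m2 GHI
print(albedo(100.0, 500.0))
```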

The pyranometer detector is mounted under a protective dome (made of precision quartz or other high-transmittance optical material) and/or a diffuser. Both designs protect the detector from the weather and provide optical properties consistent with receiving hemispheric solar radiation. Pyranometers can be fitted with ventilators that constantly blow air—sometimes heated—from under the instrument and over the dome (Figure 2-9). The ventilation reduces the potential for contaminating the pyranometer optics caused by dust, dew, frost, snow, ice, insects, or other materials. Ventilation and heating also affect the thermal offset characteristics of pyranometers with single all-black detectors (Vignola, Long, and Reda 2009). The ventilation devices can require a significant amount of electrical power (5–20 W), particularly when heated, adding to the required capacity for on-site power generation in remote areas. Both DC and AC ventilators exist, but current research indicates that DC ventilators are preferable (Michalsky, Kutchenreiter, and Long 2019).

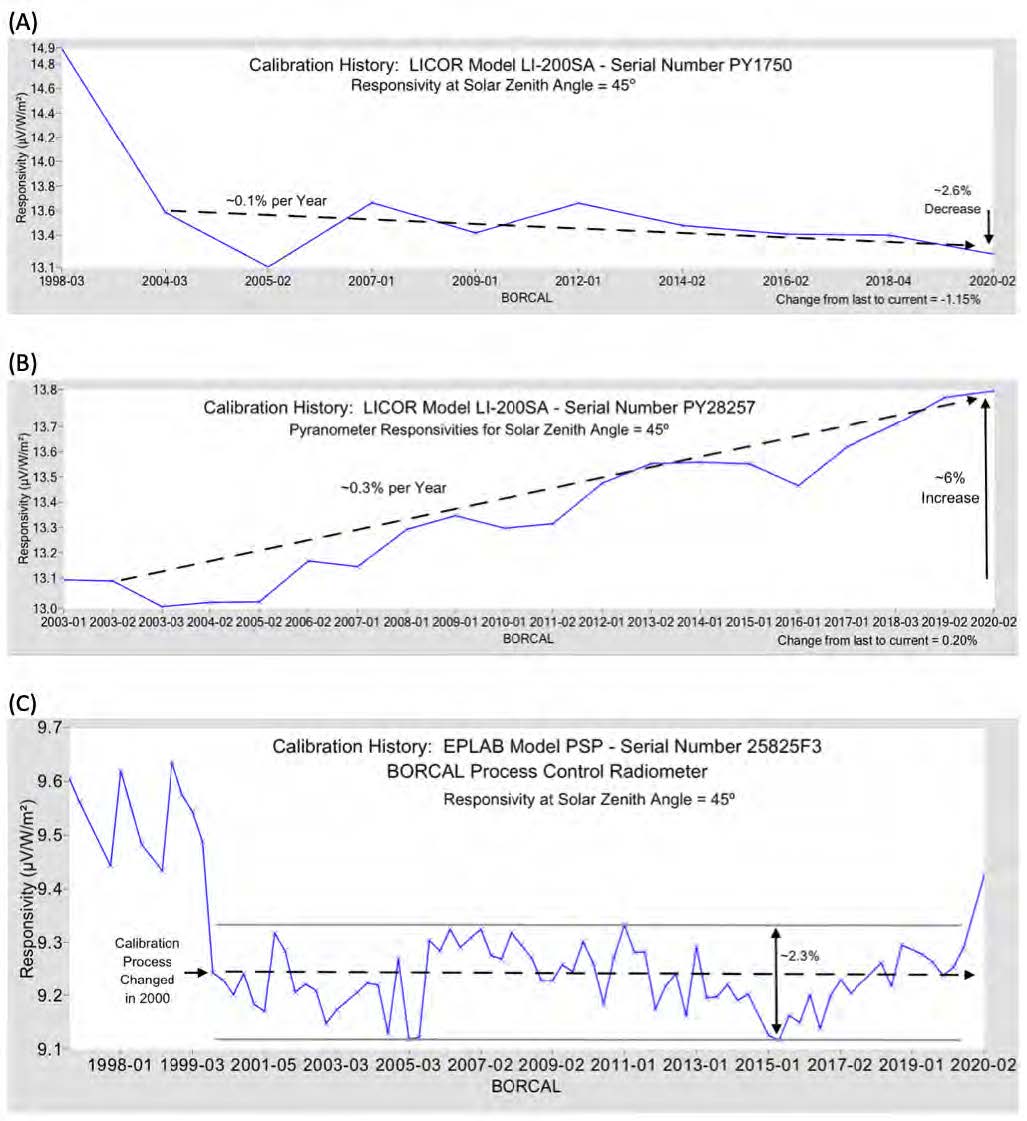

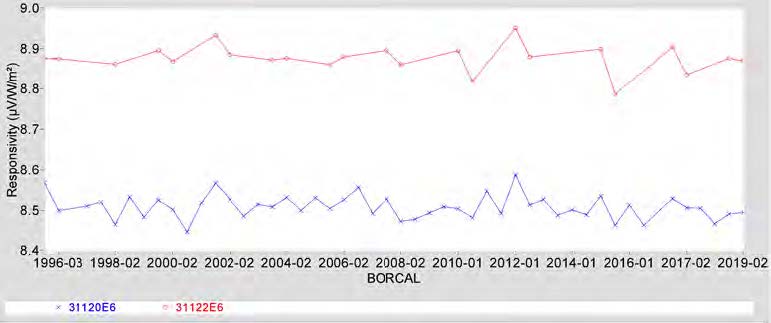

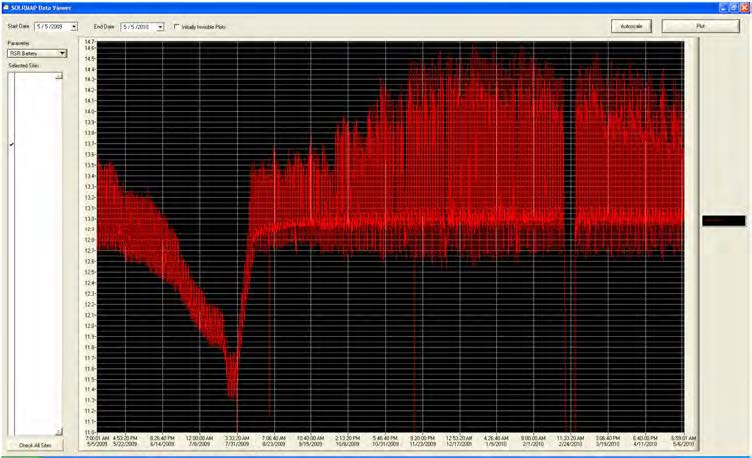

Photodiode pyranometers provide the signal in the form of a photodiode’s short-circuit current. The fast response of such photodiode pyranometers makes them interesting for some applications, e.g., the measurement of cloud enhancement or ramping events. Photodiode pyranometers employ a diffuser above the detector (Figure 2-10) to achieve an approximately hemispherical response; this also allows the glass dome to be omitted, which reduces cost. Using a diffuser rather than a transparent glass dome as the external surface makes such pyranometers measurably more dust tolerant (Maxwell et al. 1999). The long-term stability of photodiode pyranometers can differ from that of thermopile-based pyranometers, as shown in Figure 2-11 and as further analyzed in Geuder et al. (2014). These instrument-specific behaviors dictate the need for regular calibrations as recommended by the manufacturers.

Pyranometers can also be used to measure the diffuse irradiance. The required device for this measurement is known as a diffusometer. It consists of a pyranometer and a shading structure that blocks the direct radiation on its way to the sensor. Shading balls, shading disks, shading rings, or shadowbands are used for that purpose. Shading balls and shading disks must track the sun, and they cover only a small part of the sky corresponding to the angular region defined for measuring DNI (normally 5°). Shading rings and shadowbands cover the complete solar path during a day as seen from the pyranometer. They are built a little bit wider to cover the sun’s path on several consecutive days so that readjustments of the shading ring position are not required every day. The shading rings and shadowbands block a significant part of sky diffuse radiation; therefore, correction functions are necessary to determine DHI from the shading device. This explains why the accuracy of such a DHI determination is less than that of a DHI measurement with a shading disk or a shading ball. Shadowbands are further described in Section 2.2.5 in connection with the RSIs.

2.2.2 Pyrheliometer and Pyranometer Classifications

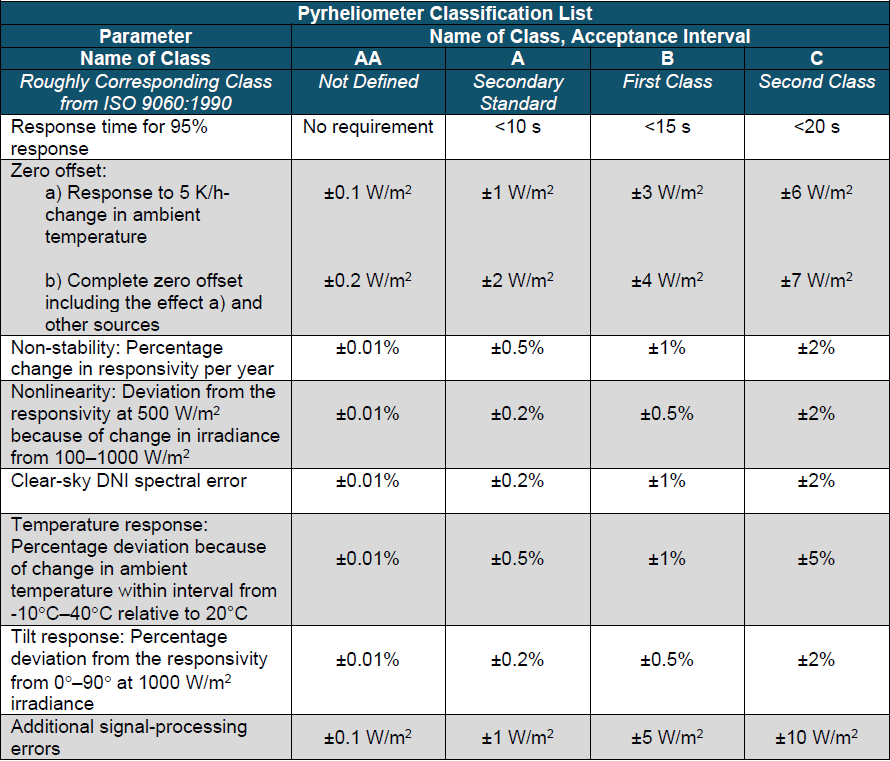

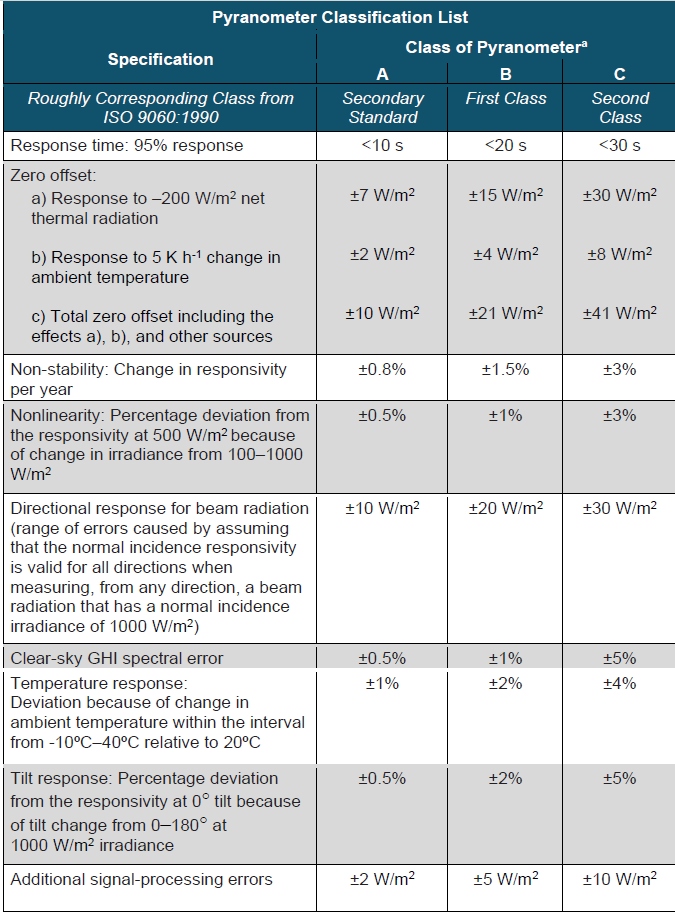

Both the International Organization for Standardization (ISO) and WMO have established instrument classifications and specifications for the measurement of solar irradiance. Radiometer classification can help to find the correct instrument and to interpret the data. Several instrument properties are used as the basis for these pyrheliometer and pyranometer classifications. The latest ISO specifications for these radiometers are found in ISO 9060 (ISO 2018) and are summarized in Table 2-2 and Table 2-3 based on Apogee (2019). The standard provides not only acceptance intervals but also corresponding guard bands, which is advantageous because the measurements used to obtain the sensor specifications have nonnegligible uncertainties.

The acceptance intervals provided by ISO 9060 give a general idea of the differences in data quality afforded by instrument classes; therefore, the radiometer classes can be understood as accuracy classes. The current standard also notes, however, that the acceptance intervals shown in the tables cannot be used for uncertainty calculations for measurements obtained at conditions that are different from those defined for the classification. For example, the temperature response limits are defined for the interval from -10°C to 40°C relative to the signal at 20°C. A measurement at 10°C will be connected to a different temperature response error than a measurement at 0°C or even -20°C. For the other parameters, the same principle applies. In particular, the spectral clear-sky irradiance error used for the classification can deviate from the spectral irradiance error for other conditions, e.g., cloudy conditions or other air masses. For pyranometers, it must also be considered that the spectral error for diffuse or tilted radiation is different from the spectral error for global horizontal radiation. A more detailed discussion of the clear-sky spectral error can be found in Wilbert et al. (2020).

The most important changes in the current ISO 9060 compared to the previous version, from 1990 (ISO 1990a), are as follows:

- Simple names are used for the classes (AA, A, B, C), and a new class is introduced mainly for ACRs.

- The clear-sky spectral error is used to classify the spectral properties of the radiometers, allowing photodiode-based radiometers to be also included in the ISO classification. Previously, the spectral selectivity was used, which excluded photodiode radiometers. The spectral selectivity is defined by ISO as the deviation of the spectral responsivity from the average spectral responsivity between 0.35–1.5 µm.

- Additional radiometer classes are defined relative to their response time and their spectral responsivity. If the 95% response time is less than 0.5 second, the radiometer can be called a “fast response radiometer.” Similarly, “spectrally flat radiometers” are defined using the spectral selectivity. If a radiometer has a spectral selectivity less than 3%, it can be called a spectrally flat radiometer.

- For Class A pyranometers, individual testing of temperature response and directional response is required.

- The final signal of a sensor can be used for classification after the application of specific correction functions (e.g., for temperature response) if these corrections are applied within the measurement system (processor within instrument or control unit). Processing errors are also used as a classification criterion.
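The two additional class labels mentioned in the list above depend on just two thresholds, which can be sketched as follows. The function name and label strings are illustrative assumptions; only the 0.5 second and 3% limits come from the text, and the full ISO 9060 classification involves many more criteria that are not reproduced here.

```python
def additional_classes(response_time_95_s, spectral_selectivity_pct):
    """Return the additional labels a radiometer qualifies for.

    response_time_95_s: 95% response time in seconds.
    spectral_selectivity_pct: spectral selectivity in percent (magnitude is used).
    """
    labels = []
    if response_time_95_s < 0.5:
        labels.append("fast response")
    if abs(spectral_selectivity_pct) < 3.0:
        labels.append("spectrally flat")
    return labels

# Example: a small thermopile sensor with a 0.2 s response time and 1.5% selectivity
print(additional_classes(0.2, 1.5))
```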

Including photodiode radiometers was considered helpful because only fast (µs) photodiode sensors can be used for accurate monitoring of extremely rapid fluctuations of solar irradiance. Under such circumstances—typically caused by cloud enhancement events—side-by-side thermopile and photodiode radiometers can disagree by a significant margin (Gueymard 2017a, 2017b). Because the most accurate way to determine GHI involves the combination of DNI and DHI measurements (ISO 2018; Michalsky et al. 1999), the shading balls, shading disks, shading masks, and rotating shadowbands used in RSIs are also defined in the current ISO 9060.

The WMO characteristics of operational pyrheliometers and pyranometers are presented for three radiometer classifications:

- High quality: near state of the art, suitable for use as a working standard, maintainable only at stations with special facilities and staff

- Good quality: acceptable for network operations

- Moderate quality: suitable for low-cost networks where moderate to low performance is acceptable.

Table 2-2. ISO 9060:2018 Specifications Summary for Pyrheliometers Used to Measure DNI

Table 2-3. ISO 9060:2018(E) Specifications Summary for Pyranometers

The WMO characteristics are similar to the classifications presented in the previous version of ISO 9060. The difference between the WMO and the outdated ISO 9060 classification is in the definition of spectral selectivity. The wavelength range used in the WMO definition is from 300–3000 nm, whereas it was from 350–1500 nm in the 1990 version of ISO 9060. The WMO limits for the selectivity for the different classes were the same as the ISO limits or, in the case of the highest pyranometer class, even stricter. This led to the unfortunate situation that, apparently, no weatherproof pyrheliometer fulfills the requirements of the WMO classes even though the spectral errors of Class A field pyrheliometers are small (clear-sky spectral errors are approximately 0.1% [Wilbert et al. 2020]). Typical pyranometers of the highest class in ISO 9060 are also excluded from the WMO classification (Wilbert et al. 2020). This is true for both the 1990 and the 2018 versions of the standard; therefore, it is currently not recommended to use the WMO classification but instead to work with the most recent version of ISO 9060.

Even within each instrument class, there can be some measurement uncertainty variations. The user should research various instrument models to gain familiarity with the design and measurement performance characteristics in view of a particular application (Myers and Wilcox 2009; Wilcox and Myers 2008; Gueymard and Myers 2009; Habte et al. 2014). Further, the accuracy of an irradiance measurement depends on the instrument itself as well as on its alignment, maintenance, data logger calibration, appropriate wiring, and other conditions and effects that degrade performance.

2.2.3 Pyrheliometer and Pyranometer Calibrations

As stated, the signal of field radiometers is a voltage or a current that is ideally proportional to the solar irradiance reaching the detector. A calibration factor is required to convert the current or voltage to a solar irradiance. The calibration factor, Ccal, is the inverse of the responsivity, Rs. For example, the responsivity of a thermopile pyrheliometer is given in µV per W/m2. The irradiance, E, can be obtained from the voltage signal, Vpyr, or from the instrument’s responsivity as:

$$E = V_{\mathrm{pyr}} \cdot C_{\mathrm{cal}} = \frac{V_{\mathrm{pyr}}}{R_s}$$
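The conversion from signal to irradiance described above can be sketched in a few lines. The function and parameter names are assumptions for illustration, and the example responsivity of 8.5 µV per W/m2 is a made-up value, not one from a calibration certificate.

```python
def irradiance_from_voltage(v_pyr_uV, responsivity_uV_per_Wm2):
    """Convert a radiometer voltage (uV) to irradiance (W/m2).

    The calibration factor is the inverse of the responsivity, so
    multiplying by it is the same as dividing by the responsivity.
    """
    if responsivity_uV_per_Wm2 <= 0:
        raise ValueError("responsivity must be positive")
    c_cal = 1.0 / responsivity_uV_per_Wm2
    return v_pyr_uV * c_cal

# Example: an 8500 uV signal with a hypothetical responsivity of 8.5 uV per W/m2
print(irradiance_from_voltage(8500.0, 8.5))
```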

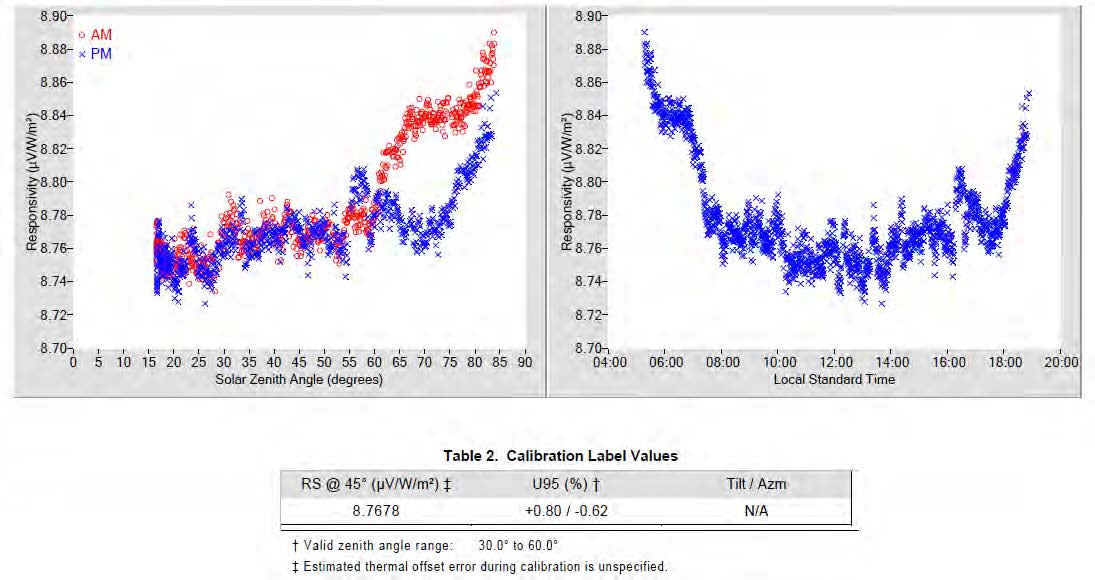

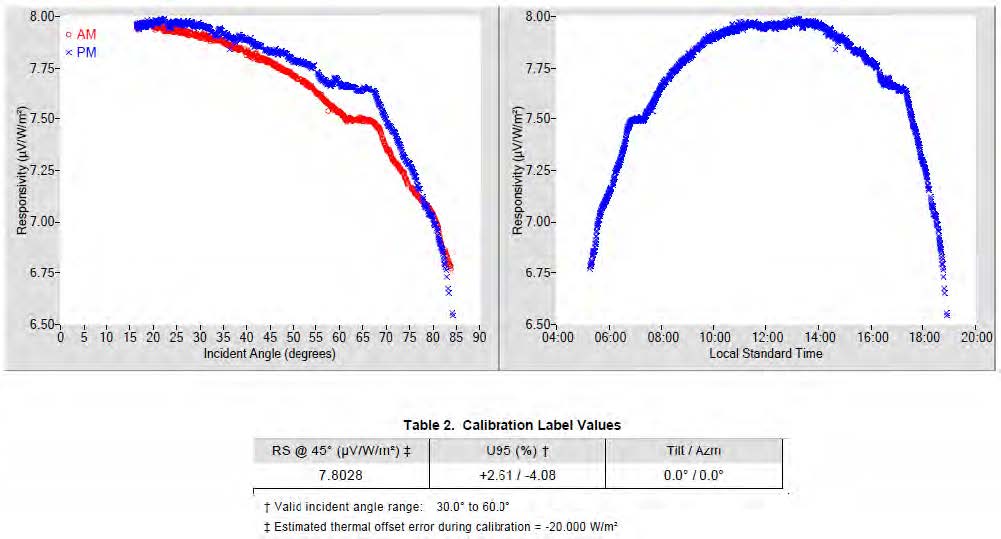

These calibration factors can vary over time, which requires periodic recalibrations, as demonstrated by the time-series plot of calibration responsivities of two pyrheliometers shown in Figure 2-12. The instability can be caused by changes in the instrument, the meteorological conditions at the time of calibration, the stability of the calibration reference radiometer(s), the performance of the data acquisition system, and other factors included in the estimated uncertainty of each calibration result.

The calibration of pyrheliometers and pyranometers is described in detail in international standards ASTM G167-05, ASTM E816-05, ASTM E824-05, ASTM G183-05, ISO 9059, ISO 9846, and ISO 9847. The calibration methods described in ISO 9846 (ISO 1993) for pyranometers and in ISO 9059 (ISO 1990b) for pyrheliometers are based on simultaneous solar irradiance measurements with test and reference instruments. ISO 9847 (ISO 1992) describes pyranometer calibrations using a reference pyranometer. These standards are expected to be revised in the coming years by the corresponding ISO working groups.

Pyrheliometers are calibrated following ISO 9059 by comparing the voltage signal of the tracked test pyrheliometer to the reference DNI from one or a group of reference pyrheliometers. For each simultaneous measurement pair, a preliminary responsivity can be calculated as the ratio of the test instrument’s voltage to the reference DNI (Figure 2-13, right). After rejecting outliers and data collected during unstable conditions, an average responsivity can be determined. Because some pyrheliometers show a noticeable correlation with the solar zenith angle (SZA), specific angular responsivities can also be derived (Figure 2-13, left and bottom). For this calibration method, it is important that clouds do not mask the sun or the circumsolar region. The calibration can be affected if significant levels of circumsolar radiation prevail during the calibration. This risk increases with the instrument’s FOV; hence, Linke turbidities should be less than 6 according to the standard method. The Linke turbidity coefficient, TL, is a measure of atmospheric attenuation under cloudless conditions. It represents the number of clean and dry atmospheres that would result in the same attenuation as the real cloudless atmosphere. One method to derive the Linke turbidity from DNI is presented in Ineichen and Perez (2002).
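The sequence of per-pair responsivities, outlier rejection, and averaging can be sketched as follows. This is only an illustration: the rejection rule used here (a fixed relative deviation from the median) and its 2% default are assumptions, not the criteria specified in ISO 9059, and the screening for unstable conditions or high Linke turbidity is left out.

```python
import statistics

def pyrheliometer_responsivity(voltages_uV, reference_dni, max_rel_dev=0.02):
    """Average responsivity (uV per W/m2) from simultaneous measurement pairs.

    voltages_uV: test pyrheliometer signals.
    reference_dni: reference DNI values (W/m2) taken at the same times.
    max_rel_dev: hypothetical outlier limit, relative to the median ratio.
    """
    # Preliminary responsivity for each pair: test voltage over reference DNI
    ratios = [v / dni for v, dni in zip(voltages_uV, reference_dni) if dni > 0]
    if not ratios:
        raise ValueError("no valid measurement pairs")
    median = statistics.median(ratios)
    kept = [r for r in ratios if abs(r - median) <= max_rel_dev * median]
    return statistics.mean(kept)

# Example with one obvious outlier (the last pair)
print(pyrheliometer_responsivity([8000.0, 8080.0, 7920.0, 9000.0], [1000.0] * 4))
```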

As mentioned, the WRR must be used as the traceable reference for the calibration of all terrestrial broadband radiometers, as stipulated by the internationally accepted Système International (SI). This internationally recognized measurement reference is a detector-based standard maintained by a group of electrically self-calibrating absolute cavity pyrheliometers at the World Radiation Center (WRC) by the Physical Meteorological Observatory in Davos, Switzerland. The present accepted inherent uncertainty in the WRR is ±0.3% (Finsterle 2011). All radiometer calibrations must be traceable to the WRR, but that does not mean that all radiometers are calibrated directly against the WRR. The calibration chain from the WRR to a field instrument can have several steps. For example, reference ACRs are used as national and institutional standards, and these instruments are calibrated by comparison to the WRR during international pyrheliometer comparisons conducted by the WRC once every 5 years. Pyranometers calibrated against traceable WRR reference pyrheliometers make these pyranometer calibrations traceable to the WRR.

Pyranometers can be calibrated outdoors with three different methods. One option, as described in ISO 9846, is to compare the DNI output from a reference pyrheliometer to that derived from the test pyranometer using the shade-unshade method. The successive voltages, Vunshade and Vshade, are proportional to GHI (unshaded) and DHI (shaded), respectively. Using the reference DNI and the relationship between GHI, DHI, and DNI, as described by Eq. (1-2a), the responsivity, Rs, of the pyranometer under test for one measurement sequence can be derived:

$$R_s = \frac{V_{\mathrm{unshade}} - V_{\mathrm{shade}}}{\mathrm{DNI} \cdot \cos(\mathrm{SZA})}$$

This method is described in more detail by Reda, Stoffel, and Myers (2003).
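As a rough sketch of one shade-unshade sequence: because GHI = DNI·cos(SZA) + DHI, the difference between the unshaded and shaded signals corresponds to the horizontal projection of the reference DNI. The function name and example numbers below are assumptions for illustration; the full procedure in the cited references includes timing and stability requirements that are not modeled here.

```python
import math

def shade_unshade_responsivity(v_unshade, v_shade, dni_ref, sza_deg):
    """Responsivity from one shade-unshade sequence.

    v_unshade, v_shade: test pyranometer signals (e.g., in uV).
    dni_ref: reference DNI (W/m2) from the pyrheliometer.
    sza_deg: solar zenith angle in degrees.
    """
    cos_sza = math.cos(math.radians(sza_deg))
    if dni_ref <= 0 or cos_sza <= 0:
        raise ValueError("the sun must be above the horizon with positive DNI")
    return (v_unshade - v_shade) / (dni_ref * cos_sza)

# Example: 5000 uV unshaded, 1000 uV shaded, DNI of 800 W/m2 at SZA = 60 degrees
print(shade_unshade_responsivity(5000.0, 1000.0, 800.0, 60.0))
```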

For this calibration method, virtually constant atmospheric conditions during the pair of shaded and unshaded measurements are required. Cloud cover must be very low, and the angular distance between clouds and the sun must be high. In addition to cloud cover, aerosol and water vapor variations could affect the calibration. This explains why only data collected for a low TL (less than 6) should be used for the calibration.

Another option offered by ISO 9846 consists of comparing the voltage signal of the test pyranometer obtained in the GHI measurement position to the GHI calculated from the DNI and DHI measurements of a reference pyrheliometer and a shaded reference pyranometer. The Rs of a pyranometer under calibration for one simultaneous set of three measurements can be computed from its unshaded signal (Vunshaded):

$$R_s = \frac{V_{\mathrm{unshaded}}}{\mathrm{DNI} \cdot \cos(\mathrm{SZA}) + \mathrm{DHI}}$$

Computing the Rs this way is called the “component-summation calibration technique.” Again, TL should be less than 6, and a high angular distance of clouds from the sun should exist during the whole calibration period.
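A minimal sketch of the component-summation calculation follows, assuming the reference GHI is built from the reference DNI and DHI as DNI·cos(SZA) + DHI. The function name and the example values are illustrative assumptions only.

```python
import math

def component_sum_responsivity(v_unshaded, dni_ref, dhi_ref, sza_deg):
    """Responsivity of a test pyranometer from one simultaneous set.

    v_unshaded: test pyranometer signal in the GHI position (e.g., in uV).
    dni_ref: reference DNI (W/m2) from the pyrheliometer.
    dhi_ref: reference DHI (W/m2) from the shaded reference pyranometer.
    sza_deg: solar zenith angle in degrees.
    """
    ghi_ref = dni_ref * math.cos(math.radians(sza_deg)) + dhi_ref
    if ghi_ref <= 0:
        raise ValueError("reference GHI must be positive")
    return v_unshaded / ghi_ref

# Example: 5000 uV with DNI = 800 W/m2, DHI = 100 W/m2, SZA = 60 degrees
print(component_sum_responsivity(5000.0, 800.0, 100.0, 60.0))
```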

The third option to calibrate pyranometers outdoors is described in ISO 9847. It compares a test pyranometer to a reference pyranometer while both sensors are in the same measurement position (either GHI or GTI). The Rs is then obtained as the ratio of the test signal to the reference irradiance. For outdoor pyranometer calibrations using a reference pyranometer (ISO 1992), the sky conditions are less precisely defined than for the other methods described. The calibration interval is adjusted depending on the sky conditions.

The indoor calibration methods from ISO 9847 use irradiance measurements under an artificial light source. For the first option, measurements are taken simultaneously after ensuring that the test and the reference pyranometer receive the same irradiance from an integrating sphere. This is done by switching pyranometer positions during the calibration procedure. The other option is to take consecutive measurements by mounting the test and the reference instrument one after the other in the same position under a direct beam. The indoor calibrations are carried out in a controlled environment that is independent from external meteorological conditions. If measurements with the reference and test pyranometer are made after each other, however, instabilities of the artificial light source increase the calibration uncertainty compared to outdoor calibrations. If simultaneous measurements are used, an additional uncertainty contribution comes from the fact that the test and the reference pyranometer might not receive exactly the same irradiance from the artificial light source, though some of this error can be mitigated by switching the positions of the instruments during the calibration procedure. Further, the incident angle of the radiation is usually not well defined for indoor calibrations. Because of the pyranometer’s directional errors (see Table 2-3), this is another source of calibration uncertainty; therefore, in general, thorough outdoor calibrations with accurate reference instruments have lower uncertainties than indoor calibrations.

The shade/unshade and component summation techniques, when conducted throughout a range of SZA, show that pyranometer responsivities are correlated with SZA. The variation of Rs as a function of SZA is like a fingerprint or signature of each individual pyranometer (Figure 2-14).

This means that the angular responsivities of different specimens of the same model can differ. Variations of pyranometer Rs can be symmetrical with respect to solar noon, or they can be highly skewed, depending on the mechanical alignment of the pyranometer, detector surface structure, and detector absorber material properties. To improve the accuracy of the GHI measurement, it is recommended to use a calibration factor that depends on the SZA and azimuth angle for each individual measurement. This method, however, is applicable only to conditions with a high direct radiation contribution to the GHI because the variation of responsivity with SZA is mostly caused by direct radiation and the associated cosine error. For situations when thick clouds mask the sun or for DHI measurements, the angular distribution of the incoming irradiance cannot be approximated well by one incidence angle. For DHI measurements, it is recommended to use the Rs for a 45° incidence angle.
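An angle-dependent responsivity could be applied as in the sketch below. It interpolates linearly in SZA only (the azimuth dependence is ignored for brevity), uses the 45° value for DHI as recommended above, and relies on a made-up responsivity table; the names and numbers are all assumptions for illustration.

```python
from bisect import bisect_right

# Hypothetical calibration results: (SZA in degrees, Rs in uV per W/m2)
RS_TABLE = [(20.0, 8.60), (45.0, 8.50), (70.0, 8.30)]

def responsivity_at(sza_deg, table):
    """Linearly interpolate Rs at sza_deg; clamp outside the table range."""
    szas = [s for s, _ in table]
    if sza_deg <= szas[0]:
        return table[0][1]
    if sza_deg >= szas[-1]:
        return table[-1][1]
    i = bisect_right(szas, sza_deg)
    (s0, r0), (s1, r1) = table[i - 1], table[i]
    return r0 + (r1 - r0) * (sza_deg - s0) / (s1 - s0)

def ghi_from_voltage(v_uV, sza_deg):
    """GHI (W/m2) using the responsivity for the current SZA."""
    return v_uV / responsivity_at(sza_deg, RS_TABLE)

def dhi_from_voltage(v_uV):
    """DHI (W/m2) using the responsivity for a 45 degree incidence angle."""
    return v_uV / responsivity_at(45.0, RS_TABLE)

print(ghi_from_voltage(4200.0, 57.5), dhi_from_voltage(850.0))
```

As noted above, the SZA-dependent factor is appropriate only when direct radiation dominates GHI, so a practical implementation would also need a rule for overcast periods.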

For accurate photodiode pyranometer calibration, further considerations beyond these standards are necessary because of the uneven spectral response. A specific calibration method is discussed in Section 2.2.5 for RSI instruments.

2.2.4 Correction Functions for Systematic Errors of Radiometers

Some pyrheliometer and pyranometer measurement errors are systematic and can be reduced by applying correction functions. An example is the correction of the directional errors, as mentioned. Some manufacturers provide one calibration constant for a pyranometer and additional correction factors for different intervals of SZA. This treatment of the incidence angle dependence has the same effect as using an incidence-angle-dependent responsivity.

Moreover, an additional temperature correction can be applied if the internal temperature of pyranometers or pyrheliometers is measured using a temperature-dependent resistor close to the sensor. Correction coefficients are often supplied by the manufacturer.
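The two corrections described above could be combined as in the following sketch. The SZA interval edges, the correction factors, the temperature coefficient, the reference temperature, and the linear form of the temperature correction are all assumed for illustration; actual values and functional forms come from the manufacturer or the calibration certificate.

```python
from bisect import bisect_right

# Hypothetical manufacturer data (illustrative values only).
SZA_EDGES = [0.0, 40.0, 60.0, 70.0, 80.0, 90.0]    # interval edges in degrees
SZA_FACTORS = [1.000, 1.004, 1.010, 1.020, 1.035]  # one factor per interval
TEMP_COEFF = -0.0005   # assumed fractional responsivity change per kelvin
T_REF_C = 25.0         # assumed reference temperature of the calibration


def corrected_irradiance(raw_w_m2, sza_deg, sensor_temp_c):
    """Apply an SZA-interval factor and a linear temperature correction."""
    # Find the interval that contains sza_deg, clamped to the table range.
    i = bisect_right(SZA_EDGES, sza_deg) - 1
    i = max(0, min(i, len(SZA_FACTORS) - 1))
    # Relative responsivity at the measured sensor temperature.
    temp_factor = 1.0 + TEMP_COEFF * (sensor_temp_c - T_REF_C)
    return raw_w_m2 * SZA_FACTORS[i] / temp_factor
```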

Measurements from only black (as opposed to black-and-white) thermoelectric pyranometers can be corrected for the expected thermal offset using additional measurements from pyrgeometers (Figure 2-4, right). Pyrgeometers allow for the determination of the downward longwave irradiance between approximately 4.5–40 µm, based on their sensor (thermopile) signal and body temperature. The thermopile is positioned below an opaque window that is transparent only to the specified infrared radiation wavelength range while excluding all visible, near-infrared, and far-infrared radiation. Most pyrgeometers must be positioned below a shading ball or disk to limit window heating by DNI. Ventilation units are also used for pyrgeometers, as in the case of pyranometers. If no pyrgeometer is available, a less accurate correction for the thermal offset can be made based on estimations of the thermal offset from the typically negative measurements collected during the night (Dutton et al. 2000; Gueymard and Myers 2009).
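The simpler nighttime-based correction can be sketched as follows. This is a deliberately crude illustration that assumes the daytime offset equals the mean nighttime reading; the cited methods are more elaborate. The SZA threshold used to define night and the function names are assumptions.

```python
from statistics import mean

NIGHT_SZA_DEG = 100.0  # assumed threshold: sun well below the horizon


def night_offset(readings):
    """Mean of nighttime readings; readings is a list of (sza_deg, irradiance)."""
    night = [g for sza, g in readings if sza > NIGHT_SZA_DEG]
    if not night:
        raise ValueError("no nighttime readings available")
    return mean(night)


def correct_daytime(readings):
    """Subtract the (typically negative) nighttime offset from daytime readings."""
    offset = night_offset(readings)
    return [(sza, g - offset) for sza, g in readings if sza <= NIGHT_SZA_DEG]
```

Because the nighttime offset is typically negative, subtracting it raises the daytime values slightly.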

Correction functions for photodiode pyranometers are presented in Section 2.2.5.2.

2.2.5 Systems for Determining Solar Irradiance Components

A measurement system that independently measures the basic solar components—GHI, DNI, and DHI—will produce data with the lowest uncertainty if the instruments are properly installed and maintained. Alternatives exist to reduce the overall cost of such a system while offering potentially acceptable data accuracies, depending on the application. These alternatives are designed to eliminate the expense and complexity of an automatic solar tracker with pyrheliometer and shaded pyranometer.

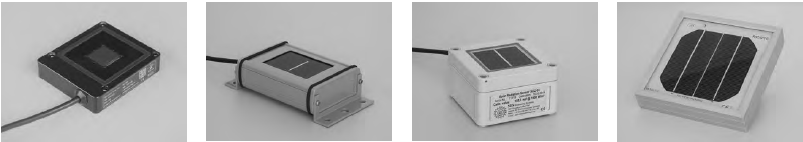

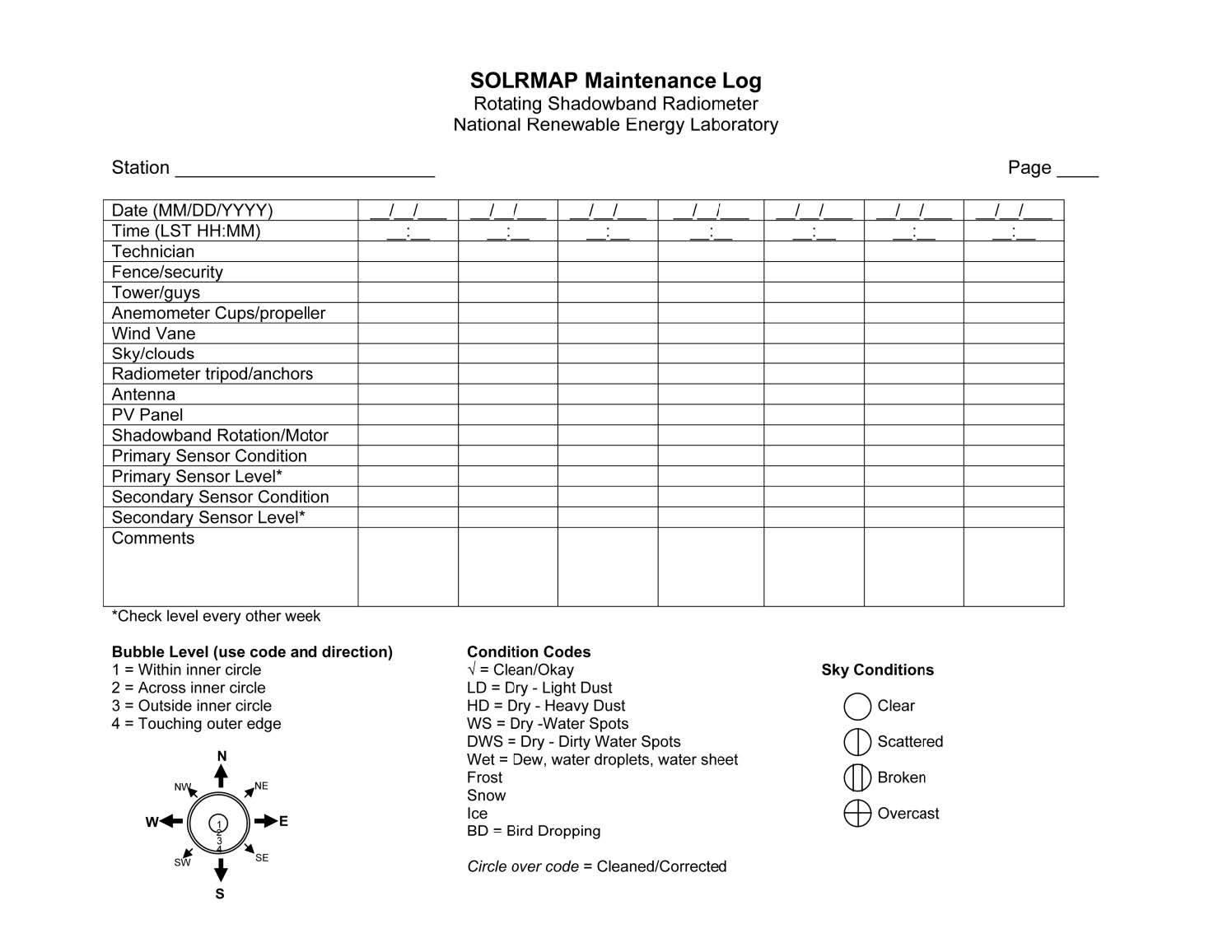

2.2.5.1 Rotating Shadowband Irradiometers

RSIs use a fast detector that is periodically shaded by a motorized shadowband, which rapidly sweeps back and forth across the detector’s FOV (Figure 2-15). The principle of operation of these RSIs is to measure GHI when unshaded and DHI when shaded. The DNI is calculated using the fundamental closure equation relating these three components, Eq. (1-2a):

$$\mathrm{DNI} = \frac{\mathrm{GHI} - \mathrm{DHI}}{\cos(\mathrm{SZA})}$$
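The closure relation GHI = DHI + DNI × cos(SZA) can be rearranged for DNI, as in the following minimal sketch. The function name and the SZA cutoff near the horizon, where the cosine becomes too small for a stable division, are assumptions made for illustration.

```python
import math


def dni_from_ghi_dhi(ghi, dhi, sza_deg, max_sza_deg=88.0):
    """Return DNI (W/m^2) from GHI, DHI, and SZA, or None near the horizon."""
    if sza_deg >= max_sza_deg:
        # cos(SZA) approaches zero, so small errors in GHI - DHI are amplified.
        return None
    return (ghi - dhi) / math.cos(math.radians(sza_deg))
```

For GHI = 600 W/m², DHI = 100 W/m², and SZA = 60°, the result is approximately 1000 W/m² because cos(60°) = 0.5.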

RSIs are often called rotating shadowband radiometers (RSRs) or rotating shadowband pyranometers (RSPs), depending on the instrument manufacturer. RSI refers to all such instruments measuring irradiance by use of a rotating shadowband. There are two types of RSIs: RSIs with continuous rotation and RSIs with discontinuous rotation.

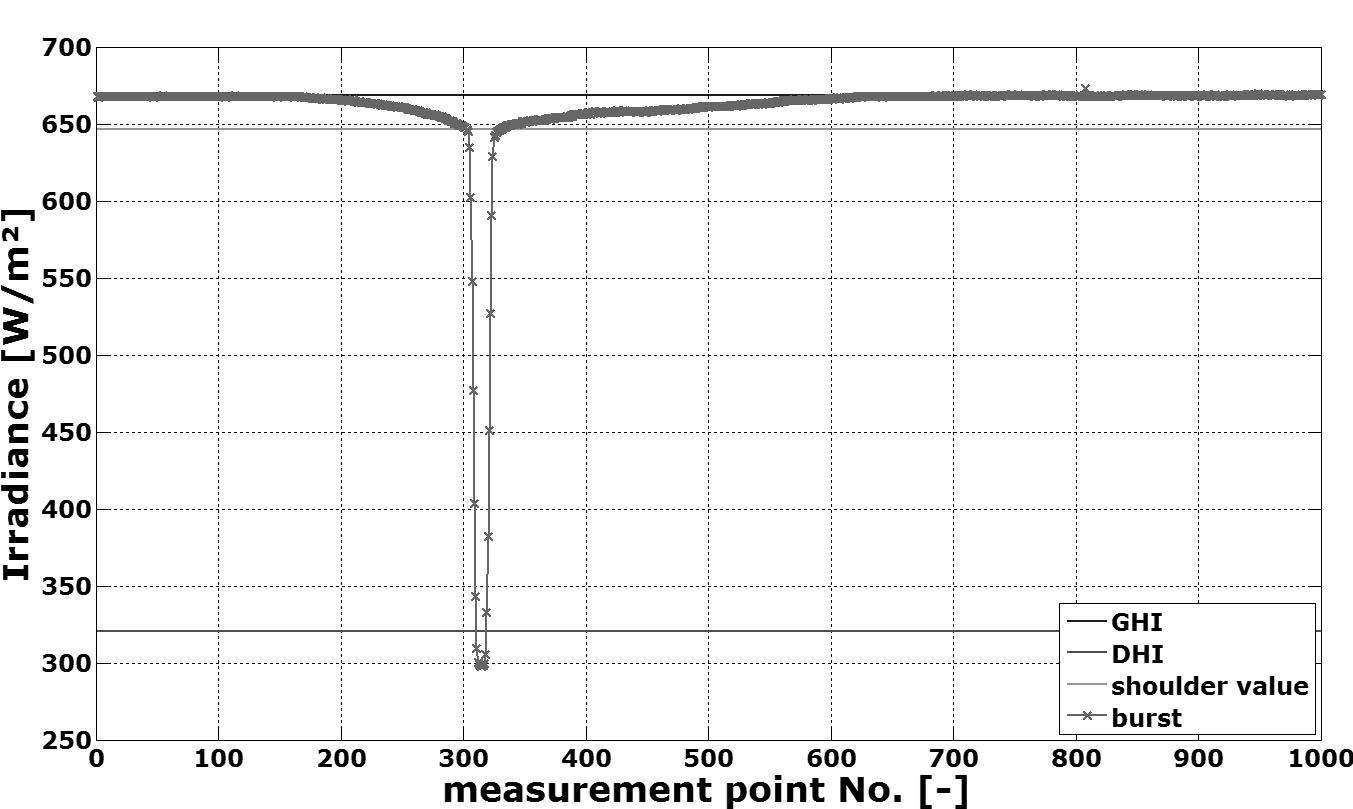

The operational principle of RSIs with continuous rotation is shown in Figure 2-16. At the beginning of each rotation cycle, the shadowband is below the pyranometer in its rest position. The rotation is performed with constant angular velocity and takes approximately 1 second. During the rotation, the irradiance is measured with a high and constant sampling rate (approximately 1 kHz). This measurement is called a burst or sweep. At the beginning of the rotation, the pyranometer measures GHI. The moment the center of the shadow falls on the center of the sensor, it approximately detects DHI; however, the shadowband covers some portion of the sky, so the minimum of the burst is less than DHI. Thus, so-called shoulder values are determined by curve analysis algorithms. Such algorithms are usually implemented in the data logger program and use the maximum of the absolute value of the burst’s slope to find the position of the “shoulder values.” The difference between GHI and the average of the two shoulder values is added to the minimum of the curve to obtain the actual DHI. Subsequently, DNI is calculated by the data logger using GHI, DHI, and the SZA calculated by the known time and coordinates of the location, as stated. All the RSIs shown in Figure 2-15 (except for the SDR-1 model) work with a continuous rotation.
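The burst analysis described above can be sketched as follows. Actual data logger programs differ in detail; in particular, taking the shoulder a fixed number of samples outside the steepest slope (`margin`) and averaging the first few samples for GHI (`n_ghi`) are assumptions made for this illustration.

```python
from statistics import mean


def dhi_from_burst(burst, margin=2, n_ghi=3):
    """Estimate (GHI, DHI) from one shadowband burst sampled at a constant rate."""
    ghi = mean(burst[:n_ghi])  # unshaded samples at the start of the rotation
    i_min = min(range(len(burst)), key=burst.__getitem__)
    slopes = [burst[i + 1] - burst[i] for i in range(len(burst) - 1)]
    if i_min == 0 or i_min >= len(slopes):
        raise ValueError("burst minimum at the edge; no shoulders available")
    # Steepest slope on each side of the minimum marks the shadow edges.
    left_edge = max(range(0, i_min), key=lambda i: abs(slopes[i]))
    right_edge = max(range(i_min, len(slopes)), key=lambda i: abs(slopes[i]))
    # Assumed shoulder positions: `margin` samples outside each shadow edge.
    left_shoulder = burst[max(left_edge - margin, 0)]
    right_shoulder = burst[min(right_edge + 1 + margin, len(burst) - 1)]
    # Add back the sky portion blocked by the band: GHI minus the shoulder mean.
    dhi = burst[i_min] + (ghi - mean([left_shoulder, right_shoulder]))
    return ghi, dhi


# Synthetic burst: GHI 800, shoulders 780, fully shaded minimum 90 (W/m^2).
example = [800] * 5 + [780] * 5 + [400] + [90] * 3 + [400] + [780] * 5 + [800] * 5
```

With the synthetic burst, the shoulder mean is 780 W/m², so 20 W/m² is added to the minimum of 90 W/m² to give a DHI of 110 W/m².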

RSIs with discontinuous rotation do not measure the complete burst but only four points of it. First, the GHI is measured while the shadowband is in the rest position. Then the shadowband rotates from the rest position toward the position just before it begins shading the diffuser, stops, and a measurement is taken (e.g., during 1 second for the SDR-1 shown in Figure 2-15). Then it continues the rotation toward the position at which the shadow lies centered on the diffuser, and another measurement is taken. The last point is measured in a position at which the shadow has just passed the diffuser. The measurement with the completely shaded diffuser is used equivalently to the minimum of the burst, as shown in Figure 2-16. The two measurements for which the shadow is close to the diffuser are used equivalently to the shoulder values to correct for the portion of the sky blocked by the shadowband.

These two types of RSIs have advantages and disadvantages. An RSI with continuous rotation needs a detector with a fast response time (much less than 1 second; e.g., approximately 10 µs). Because thermopile sensors respond too slowly for this purpose, photodiodes are used instead, typically made of silicon (Si). An example is the Si-based radiometer model LI-200SA shown in Figure 2-11. Because of the nonhomogeneous spectral response of such Si sensors (see Figure 2-2), the measurement accuracy of highest class thermopile pyranometers cannot be reached. Correction functions for this and other systematic errors must be applied to reach the accuracy required in resource assessments, albeit still not on par with the accuracy of thermopile instruments. These correction functions are discussed in Section 2.2.5.2.

RSIs with discontinuous rotation need sufficiently long measurement times for each of the four points to allow the use of a thermopile detector (e.g., the Yankee TSR-1 thermopile shadowband radiometer, now discontinued); thus, the spectral error of a photodiode can be avoided—at least partly. So far, RSIs with discontinuous rotation typically rely on a diffuser, which has its own uneven spectral transmittance over the shortwave spectrum; hence, the spectral error of such RSIs cannot be neglected. Further, the discontinuous rotation is connected to other disadvantages compared to the continuous rotation. Although RSIs with continuous rotation are not affected by small azimuth alignment errors (within approximately ±5°), the azimuth alignment of RSIs with discontinuous rotation is crucial for their accuracy. Moreover, the accuracy of the sensor’s coordinates and sweep time is more important for the discontinuous rotation. If the shadowband stops in the wrong position, the DHI measurement is incorrect. Further, the duration of the measurement with a discontinuous rotation increases the measurement uncertainty. This is especially relevant if the RSI uses a thermopile sensor and if sky conditions are not stable (e.g., cloud passages). If GHI and the sky radiance distribution change during the four-point measurement, the data used to determine DHI will be inconsistent. In contrast, this complication is less relevant for continuously rotating RSIs because their rotation takes approximately only 1 second.

DHI is typically determined one or four times per minute, but GHI measurements can be sampled at a higher frequency whenever the shadowband does not rotate—for example, every second. The temporal variation of GHI also contains some information about any concomitant change in DNI. Different algorithms are used to determine the averages of DHI and DNI between two DHI measurements using the more frequent GHI measurements. Temporal variation detected by the higher frequency GHI measurement can be used to trigger an additional sweep of the shadowband to update the DHI measurement under rapidly changing sky conditions.
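One possible way to trigger additional sweeps from the higher frequency GHI samples is sketched below. This is not the algorithm of any particular instrument; the regular interval, the relative change threshold, and the minimum gap between sweeps are hypothetical parameters.

```python
def sweep_times(ghi_series, regular_interval_s=60, rel_threshold=0.10, min_gap_s=5):
    """Return the sample indices (seconds, for 1 Hz data) at which a sweep runs.

    A sweep is performed at regular intervals, and additionally whenever GHI
    departs from its value at the last sweep by more than rel_threshold.
    """
    sweeps = []
    last_sweep = None
    ghi_ref = None  # GHI recorded at the most recent sweep
    for t, ghi in enumerate(ghi_series):
        regular = last_sweep is None or t - last_sweep >= regular_interval_s
        changed = (
            ghi_ref is not None
            and t - last_sweep >= min_gap_s
            and abs(ghi - ghi_ref) > rel_threshold * max(ghi_ref, 1.0)
        )
        if regular or changed:
            sweeps.append(t)
            last_sweep = t
            ghi_ref = ghi
    return sweeps


# 120 s of synthetic 1 Hz GHI with a cloud passage between t = 30 s and t = 70 s.
example_ghi = [800.0] * 30 + [400.0] * 40 + [800.0] * 50
```

For the synthetic series, sweeps occur at the start and at both edges of the cloud passage, so DHI is refreshed when the sky conditions change rather than only once per minute.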

The initially lower accuracy of RSIs compared to ISO 9060 first-class pyrheliometers and secondary standard pyranometers is often compensated by some unique advantages of RSIs. Their simplicity and robustness, low soiling susceptibility (Pape et al. 2009; Geuder and Quaschning 2006; Maxwell et al. 1999), low power demand, and comparatively lower cost (instrumentation and O&M) provide significant advantages compared to thermopile sensors and solar trackers, at least when operated under the measurement conditions of remote weather stations, where power and daily maintenance requirements are more difficult and costly to fulfill.

Without correction of the systematic deviations and without a matched calibration method, RSIs yield an uncertainty of 5%–10% even under the best field circumstances. This uncertainty is notably reduced, to approximately 2%–3%, with proper calibration and the application of advanced correction functions (Wilbert et al. 2016), which are described in the following sections. Most instrument providers also offer post-processing software or services that include these correction functions. Users should ask the manufacturer whether such post-processing is part of the instrument package and is readily available.

Because of the stated disadvantages of RSIs with discontinuous rotation and the higher relevance of RSIs with continuous rotation for solar energy applications, the focus here is on RSIs with Si photodiodes and continuous rotation. More information about RSIs with discontinuous rotation can be found in Harrison, Michalsky, and Berndt (1994).

2.2.5.2 Correction Functions for Rotating Shadowband Irradiometers

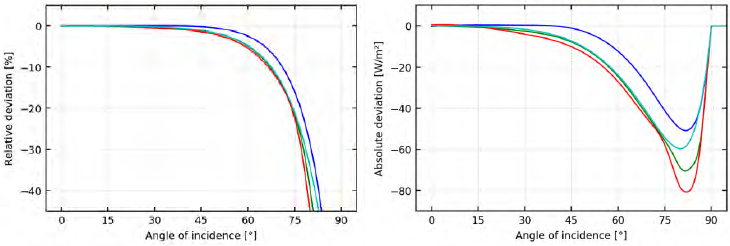

The main systematic errors of RSIs with photodiode sensors are caused by the spectral response of the detector, its cosine response, and its temperature dependence.

Several research groups have developed correction functions that reduce systematic errors in RSI readings. In all cases, the photodiode of the RSI is a LI-COR LI-200SA. Whereas temperature correction is similar in all versions (King and Myers 1997; Geuder, Pulvermüller, and Vorbrügg 2008), the methods for the spectral and cosine corrections vary.

Alados-Arboledas, Batlles, and Olmo (1995) used tabular factors for different sky clearness and skylight brightness parameters as well as a functional correction depending on SZA. King and Myers (1997) proposed functional corrections that depend on air mass and SZA, primarily targeting GHI. This approach was further developed by Augustyn et al. (2002) and Vignola (2006), including diffuse and subsequently direct beam irradiance. The combination of the GHI correction of Augustyn et al. (2002) and of the diffuse correction from Vignola (2006) provides a complete set of corrections for LI-200SA-based RSIs. Independently, a method for DNI, GHI, and DHI correction was developed by the German Aerospace Center, Deutsches Zentrum für Luft- und Raumfahrt (DLR), using functional corrections that include a particular spectral parameter obtained from GHI, DHI, and DNI (Geuder, Pulvermüller, and Vorbrügg 2008). Additional corrections that depend on air mass and SZA were used. Another set of correction functions was later presented in Geuder et al. (2011). Additional correction methods are under development (Vignola et al. 2017; 2019; Forstinger et al. 2020). An overview of RSI correction functions can be found in Jessen et al. (2017).

2.2.5.3 Calibration Methods for Rotating Shadowband Irradiometers

In addition to the corrections mentioned, special calibration techniques are required for RSIs. As of this writing, RSIs with continuous rotation are equipped with LI-200SA or LI-200R pyranometers. They come with precalibration values from the manufacturer (LI-COR) for GHI based on outdoor comparisons with an Eppley precision spectral pyranometer (PSP) with an accuracy stated as better than 5% (LI-COR Biosciences 2005). Considering that the PSP has only limited performance (Gueymard and Myers 2009), an additional calibration (e.g., on-site or with respect to DHI, DNI, or GHI independently) of the RSIs can noticeably improve their accuracy (Wilbert et al. 2016).